Steady-state Non-Line-of-Sight Imaging

- Wenzheng Chen
- Simon Daneau
- Fahim Mannan
- Felix Heide

CVPR 2019 (Oral)

We demonstrate it is possible to recover hidden objects with continuous illumination and conventional cameras.

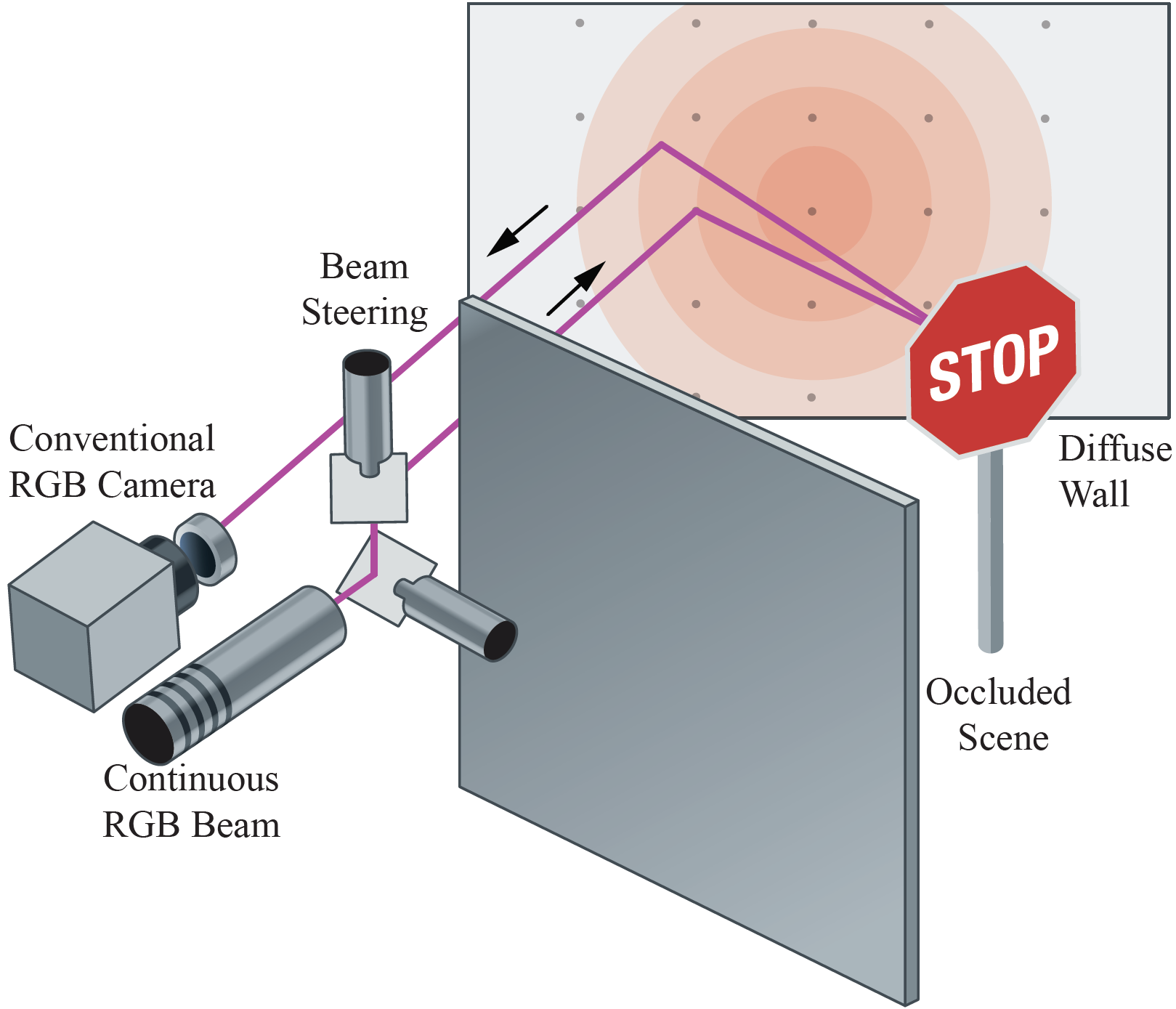

Conventional intensity cameras recover objects in the direct line of sight of the camera, whereas occluded scene parts are considered lost in this process. Non-line-of-sight (NLOS) imaging aims to recover these occluded objects by analyzing their indirect reflections on visible scene surfaces. Existing NLOS methods temporally probe the indirect light transport to unmix light paths based on their travel time, which mandates specialized instrumentation that suffers from low photon efficiency, high cost, and mechanical scanning. We depart from temporal probing and demonstrate steady-state NLOS imaging using conventional intensity sensors and continuous illumination. Instead of assuming perfectly isotropic scattering, the proposed method exploits directionality in the hidden surface reflectance, which produces (small) spatial variations of the indirect reflections as the illumination changes. To tackle the shape dependence of these variations, we propose a trainable architecture that learns to map diffuse indirect reflections to scene reflectance using only synthetic training data. Relying on consumer color image sensors with high fill factor, high quantum efficiency, and low read-out noise, we demonstrate high-fidelity color NLOS imaging for scene configurations previously tackled with picosecond time resolution.
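The role of directional reflectance can be illustrated with a toy steady-state forward model. This is a minimal sketch, not the paper's renderer: it assumes a flat wall at z = 0, point samples of the hidden surface, no occlusion, and a hypothetical diffuse-plus-Phong BRDF; the function name `indirect_reflection_map` and the parameters `k_s` and `shininess` are illustrative. With a purely diffuse hidden point, moving the laser only rescales the wall pattern; adding a directional lobe makes the pattern shift.

```python
import numpy as np


def indirect_reflection_map(laser, wall_xy, pts, normals, albedo,
                            k_s=0.5, shininess=20.0):
    """Toy two-bounce (laser spot -> hidden surface -> wall) steady-state image.

    laser:   (3,) laser spot on the wall plane z = 0
    wall_xy: (H, W, 2) x/y coordinates of the observed wall pixels (z = 0)
    pts:     (N, 3) hidden-surface sample points with z > 0
    normals: (N, 3) unit normals of the hidden samples (facing the wall)
    albedo:  (N,) diffuse albedo of the hidden samples
    k_s, shininess: weight and exponent of a hypothetical Phong lobe
    """
    wall_n = np.array([0.0, 0.0, 1.0])  # wall normal, pointing into the hidden scene
    H, W, _ = wall_xy.shape
    wall = np.concatenate([wall_xy, np.zeros((H, W, 1))], axis=-1).reshape(-1, 3)

    # First segment: laser spot on the wall -> hidden point.
    d_in = pts - laser
    r_in = np.linalg.norm(d_in, axis=1)
    w_in = d_in / r_in[:, None]                         # propagation direction
    cos_wall = np.clip(w_in @ wall_n, 0.0, None)
    cos_hit = np.clip(-(w_in * normals).sum(axis=1), 0.0, None)
    irradiance = cos_wall * cos_hit / r_in**2           # (N,)

    # Second segment: hidden point -> every wall pixel.
    d_out = wall[None, :, :] - pts[:, None, :]          # (N, M, 3)
    r_out = np.linalg.norm(d_out, axis=2)
    w_out = d_out / r_out[..., None]
    cos_leave = np.clip((w_out * normals[:, None, :]).sum(axis=2), 0.0, None)
    cos_arrive = np.clip(-(w_out @ wall_n), 0.0, None)

    # BRDF: Lambertian term plus a normalized Phong lobe around the mirror direction.
    mirror = w_in - 2.0 * (w_in * normals).sum(axis=1, keepdims=True) * normals
    cos_alpha = np.clip((w_out * mirror[:, None, :]).sum(axis=2), 0.0, None)
    brdf = (albedo[:, None] / np.pi
            + k_s * (shininess + 2.0) / (2.0 * np.pi) * cos_alpha**shininess)

    contrib = irradiance[:, None] * brdf * cos_leave * cos_arrive / r_out**2
    return contrib.sum(axis=0).reshape(H, W)


if __name__ == "__main__":
    xs = np.linspace(-1.0, 1.0, 32)
    wall_xy = np.stack(np.meshgrid(xs, xs), axis=-1)    # x varies along columns
    pts = np.array([[0.0, 0.0, 1.0]])                   # one hidden point facing the wall
    normals = np.array([[0.0, 0.0, -1.0]])
    albedo = np.array([0.8])
    for k_s in (0.0, 0.5):
        left = indirect_reflection_map(np.array([-0.5, 0.0, 0.0]), wall_xy, pts, normals, albedo, k_s)
        right = indirect_reflection_map(np.array([0.5, 0.0, 0.0]), wall_xy, pts, normals, albedo, k_s)
        change = np.abs(left / left.max() - right / right.max()).max()
        print(f"k_s={k_s}: max change of normalized pattern = {change:.3f}")
```

With `k_s=0.0` the printed change is zero up to rounding, while with `k_s=0.5` the bright lobe moves to the opposite side of the wall when the laser moves, which is the kind of small, shape-dependent variation the learned method relies on.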

Paper

Wenzheng Chen, Simon Daneau, Fahim Mannan, Felix Heide

Steady-state Non-Line-of-Sight Imaging

CVPR 2019 (oral)

Selected Results

Experimental geometry and albedo reconstructions for the special case of planar objects.

The first column shows a conventionally painted road sign. The second and third columns show objects with diamond-grade retroreflective surfaces which, although designed to be retroreflective, contain faint specular components visible in the measurements. The last column shows the reconstruction for an engineering-grade retroreflective sign. Our method takes around two seconds, including capture and reconstruction, and achieves high-resolution results without temporal sampling.

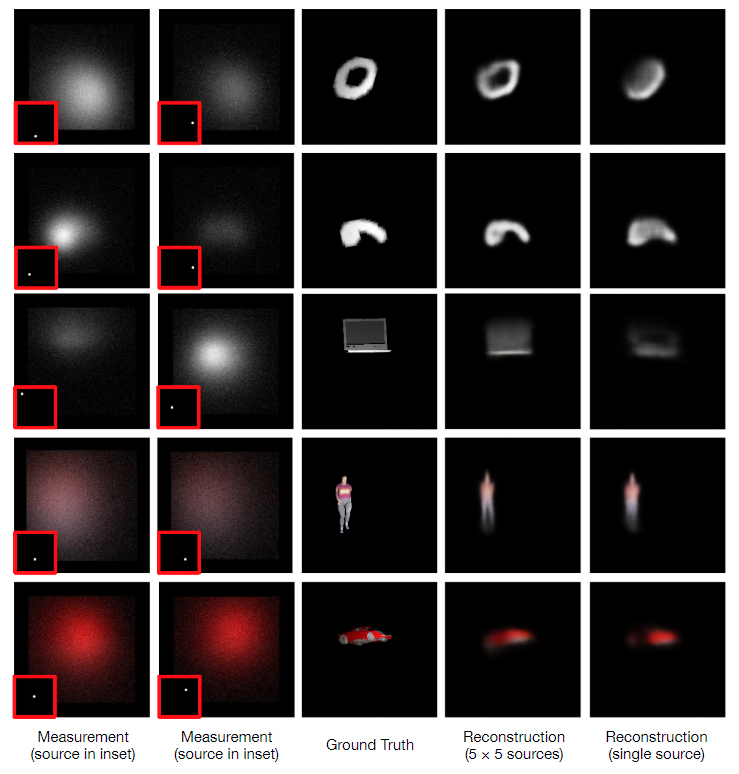

Qualitative results on synthetic data (including sensor noise simulation).

The first two columns show examples of the 25 rendered indirect reflection maps that the proposed method takes as input. The third column shows the unknown scene (orthogonal projection). The last two columns show reconstructions from 5 × 5 and 1 × 1 indirect reflection maps, respectively. While denser beam sampling on the wall improves recovery, even a single sampling position provides enough information for recovery.
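The network input described in this caption can be sketched as a stack of indirect reflection maps, one per laser position on the wall. This sketch assumes a uniform k × k grid of laser spots, channels-first stacking, and a simple Poisson-plus-Gaussian noise model; `render`, `laser_positions`, `add_sensor_noise`, `build_input`, `photons_at_max`, and `read_std` are hypothetical, since the page does not specify the renderer, the grid spacing, or the paper's exact noise simulation.

```python
import numpy as np


def laser_positions(k, half_extent=0.5):
    """k x k grid of laser spots on the wall plane z = 0 (k = 1 gives the center)."""
    coords = np.array([0.0]) if k == 1 else np.linspace(-half_extent, half_extent, k)
    gx, gy = np.meshgrid(coords, coords)
    return np.stack([gx.ravel(), gy.ravel(), np.zeros(k * k)], axis=1)


def add_sensor_noise(img, photons_at_max=1000.0, read_std=2.0, rng=None):
    """Poisson shot noise plus Gaussian read noise (hypothetical parameters)."""
    rng = np.random.default_rng() if rng is None else rng
    scale = photons_at_max / max(img.max(), 1e-12)     # map intensities to photon counts
    electrons = rng.poisson(img * scale) + rng.normal(0.0, read_std, img.shape)
    return np.clip(electrons / scale, 0.0, None)


def build_input(render, k, rng=None):
    """Stack one noisy indirect reflection map per laser position: (k*k, H, W)."""
    maps = [add_sensor_noise(render(p), rng=rng) for p in laser_positions(k)]
    return np.stack(maps, axis=0)


if __name__ == "__main__":
    # Dummy renderer standing in for a real two-bounce simulation.
    render = lambda p: np.full((8, 8), 1.0 + p[0])
    print(build_input(render, 5).shape, build_input(render, 1).shape)
```

Under these assumptions, the 5 × 5 and 1 × 1 configurations differ only in the number of input channels (25 versus 1).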

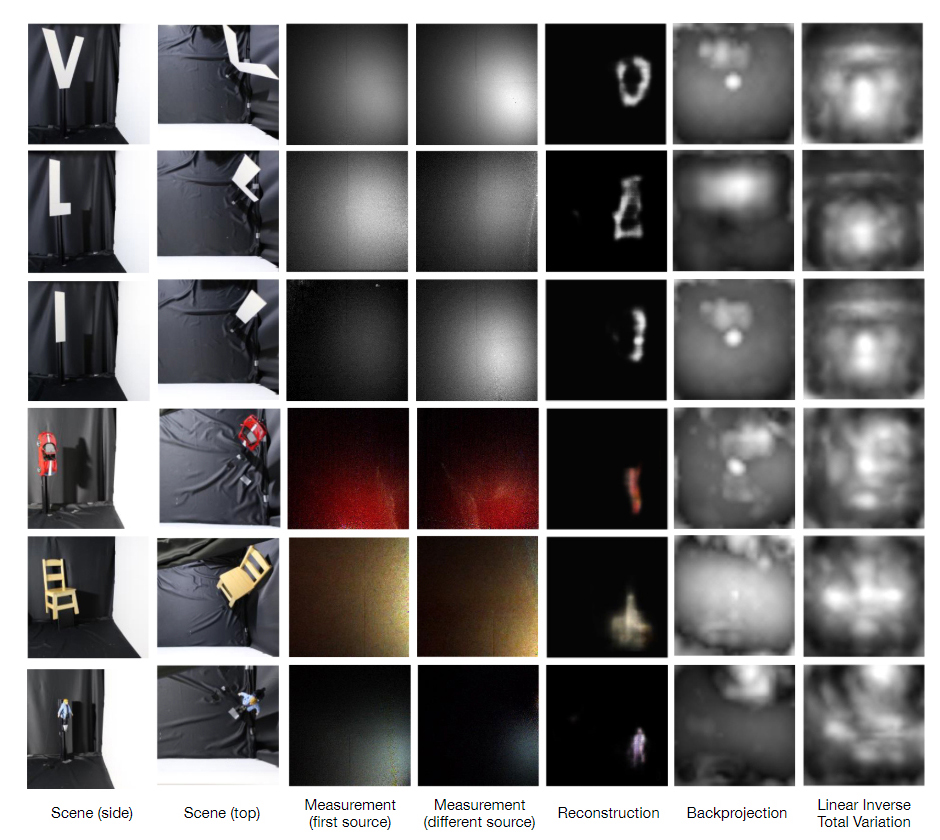

Experimental albedo reconstructions for various objects.

The first two columns show side and top views of the unknown hidden scene. The following two columns show examples of captured measurement data for two different light source positions. The last three columns show reconstruction results of our method, compared against backprojection (without filtering) and Linear Inverse Optimization using a Total Variation prior. For all methods, we show the orthogonal projection onto the wall.
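Both baselines can be sketched for a generic linear light-transport model y = A x, where A is a hypothetical transport matrix (one column per hidden-scene pixel) and x is the albedo image. The TV solver below uses projected gradient descent on a smoothed isotropic TV penalty; that solver, the smoothing `eps`, and the weight `lam` are assumptions for illustration, not the settings used for the comparison.

```python
import numpy as np


def backprojection(A, y, shape):
    """Unfiltered backprojection: x_bp = A^T y, reshaped to the hidden-scene grid."""
    return (A.T @ y).reshape(shape)


def tv_grad(x, eps):
    """Gradient of the smoothed isotropic TV  sum_ij sqrt(dx^2 + dy^2 + eps^2)."""
    dx = np.diff(x, axis=1, append=x[:, -1:])   # forward differences, 0 at the border
    dy = np.diff(x, axis=0, append=x[-1:, :])
    mag = np.sqrt(dx**2 + dy**2 + eps**2)
    px, py = dx / mag, dy / mag
    g = -px - py                                # adjoint of the forward differences
    g[:, 1:] += px[:, :-1]
    g[1:, :] += py[:-1, :]
    return g


def tv_inverse(A, y, shape, lam=1e-2, eps=1e-3, iters=500):
    """Projected gradient descent on ||A x - y||^2 + lam * TV_eps(x), with x >= 0."""
    # Step size from a Lipschitz bound: 2||A||^2 for the data term, 8 lam / eps for TV.
    step = 1.0 / (2.0 * np.linalg.norm(A, 2) ** 2 + 8.0 * lam / eps)
    x = np.zeros(shape)
    for _ in range(iters):
        residual = A @ x.ravel() - y
        grad = 2.0 * (A.T @ residual).reshape(shape) + lam * tv_grad(x, eps)
        x = np.maximum(x - step * grad, 0.0)    # keep albedo non-negative
    return x
```

Backprojection is a single matrix-vector product, while the TV-regularized inverse trades many iterations for sharper, less blurred reconstructions.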

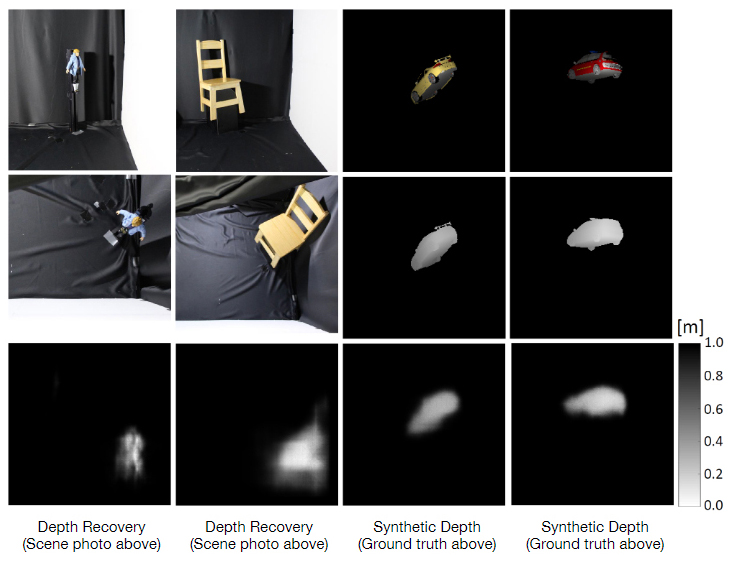

Learning Hidden Geometry Reconstruction.

Our method enables not only albedo recovery but also geometry reconstruction from steady-state indirect reflections. Depth recovery of hidden scenes is demonstrated in the first two columns. From top to bottom: side- and top-view photographs of the hidden scene, and the reconstructed depth map in meters. The last two columns show synthetic results. From top to bottom: orthogonal view of the hidden scene, ground-truth depth of the hidden scene, and reconstructed depth.

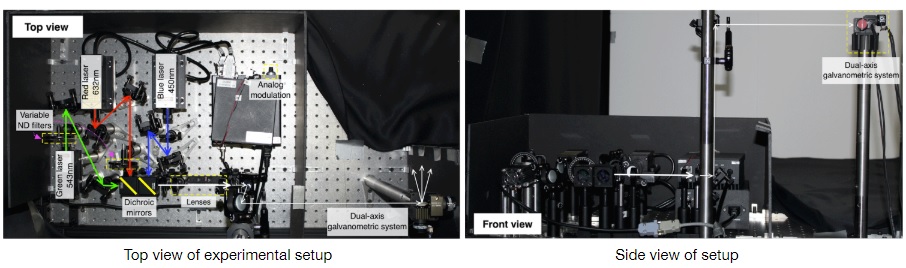

Prototype RGB laser source showing the optical path which ends in a dual-axis galvo.

Variable neutral density (ND) filters and analog modulation of one laser control the power of the individual lasers. A combination of long-focal-length lenses and several irises reduces the beam diameter. Only the white-light path is shown. The camera is placed right next to the galvo.
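Power control with variable ND filters follows the standard optical-density relation P_out = P_in · 10^(−OD). The sketch below computes the density needed to balance the three color channels; the laser powers and the common target power are hypothetical (the page does not give the prototype's values), and the analog modulation is not modeled.

```python
import math


def transmitted_power(p_in_mw, optical_density):
    """Power after an ND filter of optical density OD: P_out = P_in * 10**(-OD)."""
    return p_in_mw * 10.0 ** (-optical_density)


def required_od(p_in_mw, p_target_mw):
    """Optical density needed to attenuate p_in down to p_target."""
    if not 0.0 < p_target_mw <= p_in_mw:
        raise ValueError("target power must be positive and no larger than the input")
    return math.log10(p_in_mw / p_target_mw)


if __name__ == "__main__":
    # Hypothetical output powers (mW) of the red, green and blue lasers.
    lasers = {"red": 100.0, "green": 50.0, "blue": 80.0}
    target = 20.0  # hypothetical common power per channel, mW
    for name, p in lasers.items():
        od = required_od(p, target)
        print(f"{name}: OD {od:.2f} -> {transmitted_power(p, od):.1f} mW")
```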