The Differentiable Lens: Compound Lens Search over Glass Surfaces and Materials for Object Detection

Rather than designing lenses in isolation, a more comprehensive approach that takes into account the complete image acquisition and processing pipeline can generally lead to better imaging quality or performance on vision tasks.

In this work, we introduce a differentiable lens simulation model and an optimization method for designing compound lenses specifically for downstream vision tasks, and apply them to automotive object detection.

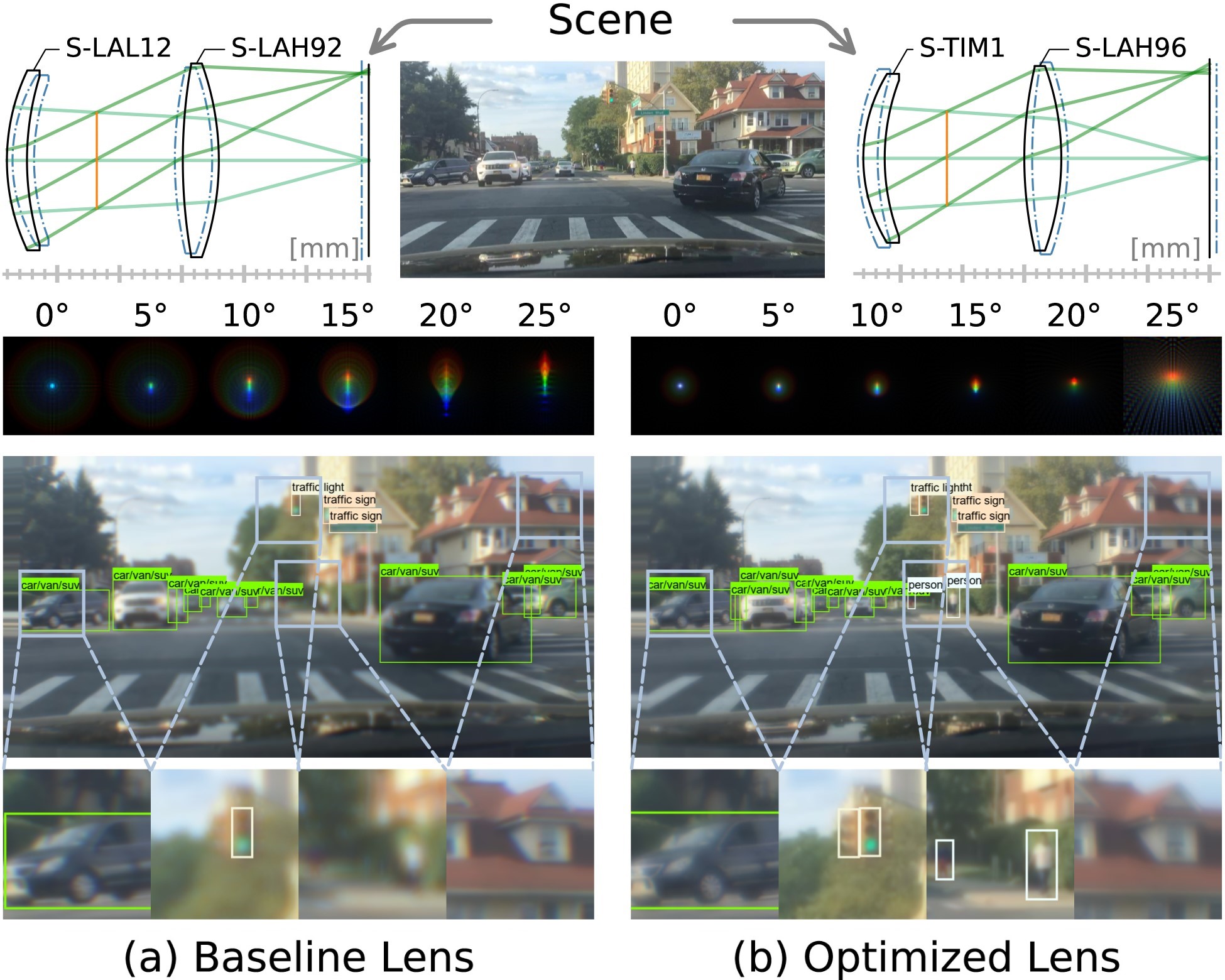

We propose an optimization strategy to address the challenges of lens design, which is notorious for non-convex loss landscapes and many manufacturing constraints, and which becomes harder still in joint optimization tasks. We find that even simple two-element lenses such as the ones pictured here can be compelling candidates for low-cost automotive object detection despite noticeably worse image quality, provided that the object detector is trained on realistically aberrated images, and even more so when the lens is optimized jointly.

Geoffroi Côté, Fahim Mannan, Simon Thibault, Jean-François Lalonde, Felix Heide

CVPR 2023

Joint Optimization Framework

Our method approaches the lens design and object detection subtasks jointly rather than in isolation. To this end, during the training phase, we simulate realistic aberrations on dataset images before they enter the object detector.

The lens parameters (curvatures, spacings, and glass materials) are optimized with respect to two terms: a loss function similar to what is used in traditional lens design, which targets optical performance and geometrical constraints via exact ray tracing, and the object detection loss.

The object detector parameters are trained concurrently to minimize the object detection loss while adjusting to the lens aberrations.

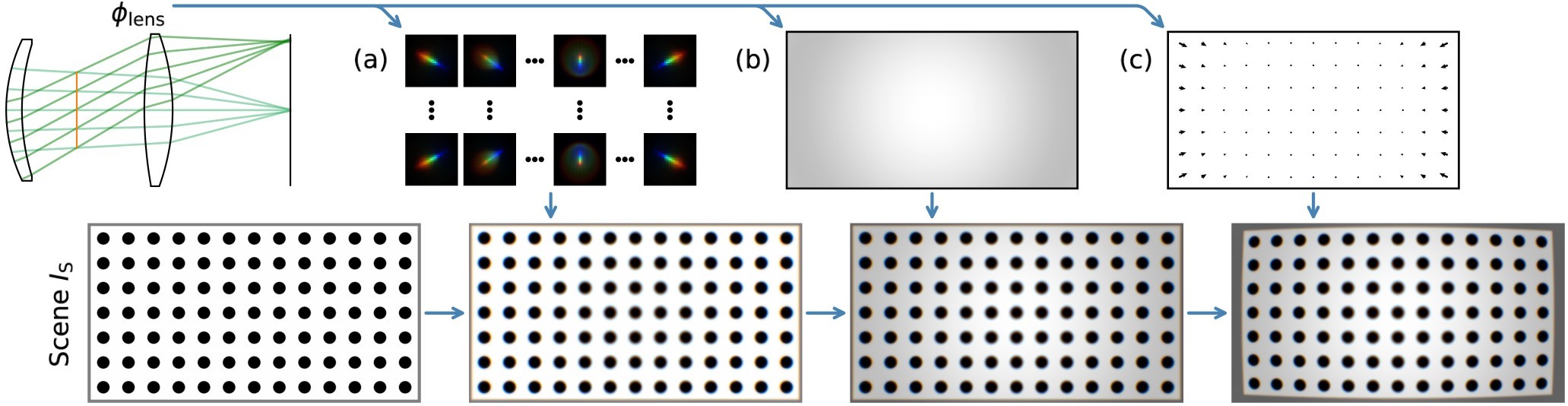

Lens Simulation Model

Our lens simulation model simulates the passage of light through the lens, where natural dataset images are used as examples of real-world scenes. All operations are based on exact ray tracing using Snell’s Law.
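As an illustration of the basic operation behind exact ray tracing, the following minimal sketch applies the vector form of Snell's law at a single interface. The function name `refract` and its argument conventions are assumptions made for this example and are not taken from the authors' implementation, which also has to intersect rays with each curved surface to obtain the normal.

```python
import math

def refract(direction, normal, n1, n2):
    """Refract a unit ray direction at an interface (vector form of Snell's law).

    direction: unit 3-vector of the incoming ray.
    normal:    unit surface normal pointing against the incoming ray,
               so that dot(direction, normal) < 0.
    n1, n2:    refractive indices before and after the interface.
    Returns the refracted unit direction, or None on total internal reflection.
    """
    eta = n1 / n2
    cos_i = -sum(d * n for d, n in zip(direction, normal))
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)   # sin^2 of the refracted angle
    if sin2_t > 1.0:
        return None                              # total internal reflection
    cos_t = math.sqrt(1.0 - sin2_t)
    return tuple(eta * d + (eta * cos_i - cos_t) * n
                 for d, n in zip(direction, normal))

# Example: a ray hitting a flat air-to-glass interface at 30 degrees incidence.
theta = math.radians(30.0)
incoming = (math.sin(theta), 0.0, math.cos(theta))   # travelling toward +z
refracted = refract(incoming, (0.0, 0.0, -1.0), 1.0, 1.5)
```

Every step in this function is differentiable with respect to the ray direction, the normal, and the refractive indices, which is what lets gradients reach the curvatures, spacings, and glass variables when the same operation is written in an automatic differentiation framework.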

To increase numerical efficiency and accuracy, we split the simulation stage into three phases:

(a) We evaluate the PSF for different regions of the original image, then employ a spatially varying convolution to apply the blur-inducing geometrical aberrations.

(b) For relative illumination, we estimate a map of relative illumination factors and multiply it pixel-wise with the image.

(c) To apply distortion, we compute a map of the distortion shifts and warp the image.
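The three phases above can be sketched on a grayscale image with NumPy. This is a simplified illustration under stated assumptions, not the authors' implementation: the PSFs, the relative illumination map, and the distortion shifts are taken as given inputs rather than computed by ray tracing, image regions are hard-edged tiles with no blending, and the warp uses nearest-neighbor sampling. All function names are hypothetical.

```python
import numpy as np

def convolve2d_same(img, kernel):
    """Same-size 2D convolution with edge padding (odd-sized kernel assumed)."""
    flipped = kernel[::-1, ::-1]                 # flip for true convolution
    kh, kw = flipped.shape
    ph, pw = kh // 2, kw // 2
    padded = np.pad(img, ((ph, ph), (pw, pw)), mode="edge")
    out = np.zeros(img.shape, dtype=float)
    for i in range(kh):
        for j in range(kw):
            out += flipped[i, j] * padded[i:i + img.shape[0], j:j + img.shape[1]]
    return out

def apply_spatially_varying_blur(img, psf_grid):
    """(a) Blur each image tile with the PSF assigned to that region."""
    rows, cols = len(psf_grid), len(psf_grid[0])
    h, w = img.shape
    ys = np.linspace(0, h, rows + 1).astype(int)  # tile boundaries
    xs = np.linspace(0, w, cols + 1).astype(int)
    out = np.empty((h, w), dtype=float)
    for r in range(rows):
        for c in range(cols):
            blurred = convolve2d_same(img, psf_grid[r][c])
            out[ys[r]:ys[r + 1], xs[c]:xs[c + 1]] = \
                blurred[ys[r]:ys[r + 1], xs[c]:xs[c + 1]]
    return out

def apply_relative_illumination(img, ri_map):
    """(b) Pixel-wise multiplication with the relative illumination factors."""
    return img * ri_map

def apply_distortion(img, shift_y, shift_x):
    """(c) Warp: each output pixel samples the input at its shifted location."""
    h, w = img.shape
    yy, xx = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    src_y = np.clip(np.rint(yy + shift_y).astype(int), 0, h - 1)
    src_x = np.clip(np.rint(xx + shift_x).astype(int), 0, w - 1)
    return img[src_y, src_x]

def simulate(img, psf_grid, ri_map, shift_y, shift_x):
    """Chain the three phases in the order (a), (b), (c)."""
    out = apply_spatially_varying_blur(img, psf_grid)
    out = apply_relative_illumination(out, ri_map)
    return apply_distortion(out, shift_y, shift_x)
```

Nearest-neighbor sampling is used here only for brevity; a differentiable pipeline would need an interpolating warp (for example bilinear) so that gradients can flow through the distortion shifts.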

Joint Optimization Strategy

We seek to freely optimize all lens variables on downstream tasks without compromising manufacturability.

Our lens optimization strategy includes:

(1) Quantized continuous glass variables — we use auxiliary variables (orange cross marks) to explore the solution space, and replace them with available glass materials (orange squares) through the “gradient pass-through” trick.

(2) Paraxial image solve — to allow the lens to remain mostly in focus throughout optimization, we locate the paraxial image plane with a ray-tracing operation and optimize only the defocus.

(3) Focal length solve — we enforce the desired focal length by solving the rightmost surface profile on the fly.

(4) Various constraints — for manufacturability and stability, we devise a loss based on all ray paths to guarantee feasible air and glass spacings and reasonable ray angles.
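Items (1) to (3) can be illustrated with a short sketch in plain Python. Everything here is an assumption made for illustration: the toy three-glass catalog (approximate refractive index and Abbe number values), the distance scaling `v_scale`, the explicit gradient argument standing in for automatic differentiation, and the standard paraxial refraction and transfer equations used for the two solves. It is not the authors' code.

```python
# Hypothetical toy catalog: name -> (refractive index n_d, Abbe number V_d), approximate.
GLASS_CATALOG = {"N-BK7": (1.5168, 64.17), "N-F2": (1.62005, 36.43),
                 "N-SF11": (1.78472, 25.68)}

def snap_to_catalog(aux, catalog=GLASS_CATALOG, v_scale=100.0):
    """(1) Forward pass: replace continuous (n, V) variables by the nearest glass."""
    n_aux, v_aux = aux
    return min(catalog, key=lambda g: (catalog[g][0] - n_aux) ** 2
               + ((catalog[g][1] - v_aux) / v_scale) ** 2)

def pass_through_update(aux, grad_at_snapped, lr):
    """(1) Backward pass: the gradient evaluated at the snapped glass is applied
    to the auxiliary variables as if the snapping step were the identity."""
    return tuple(a - lr * g for a, g in zip(aux, grad_at_snapped))

def paraxial_trace(curvatures, thicknesses, indices, y=1.0, u=0.0):
    """Trace a paraxial ray (height y, angle u) through the given surfaces.
    indices[i] and indices[i + 1] are the media before and after surface i."""
    for i, c in enumerate(curvatures):
        if i > 0:
            y = y + thicknesses[i - 1] * u            # transfer to surface i
        n, n_next = indices[i], indices[i + 1]
        u = (n * u - y * c * (n_next - n)) / n_next   # paraxial refraction
    return y, u

def solve_last_surface(free_curvatures, thicknesses, indices, focal_length):
    """(3) Solve the last curvature for the target focal length, and
    (2) return the paraxial image distance measured from the last surface.
    Needs at least one free surface and air (index 1) in image space."""
    y, u = paraxial_trace(free_curvatures, thicknesses, indices)
    y = y + thicknesses[len(free_curvatures) - 1] * u  # transfer to last surface
    n, n_next = indices[-2], indices[-1]
    u_target = -1.0 / focal_length        # exit angle for unit entrance height
    c_last = (n * u - n_next * u_target) / (y * (n_next - n))
    image_distance = -y / u_target        # where the paraxial ray crosses the axis
    return c_last, image_distance

# Example: a singlet with a flat first surface, 5 mm thick, target f = 100 mm.
n_glass = GLASS_CATALOG[snap_to_catalog((1.52, 60.0))][0]
c_last, image_distance = solve_last_surface([0.0], [5.0], [1.0, n_glass, 1.0], 100.0)
defocus = 0.0                              # the only image-plane variable left to optimize
image_plane = image_distance + defocus
```

Because the last curvature and the image distance are recomputed from the other variables at every step, the focal length is met exactly throughout optimization and the optimizer only has to adjust a small defocus offset instead of the full image-plane position.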

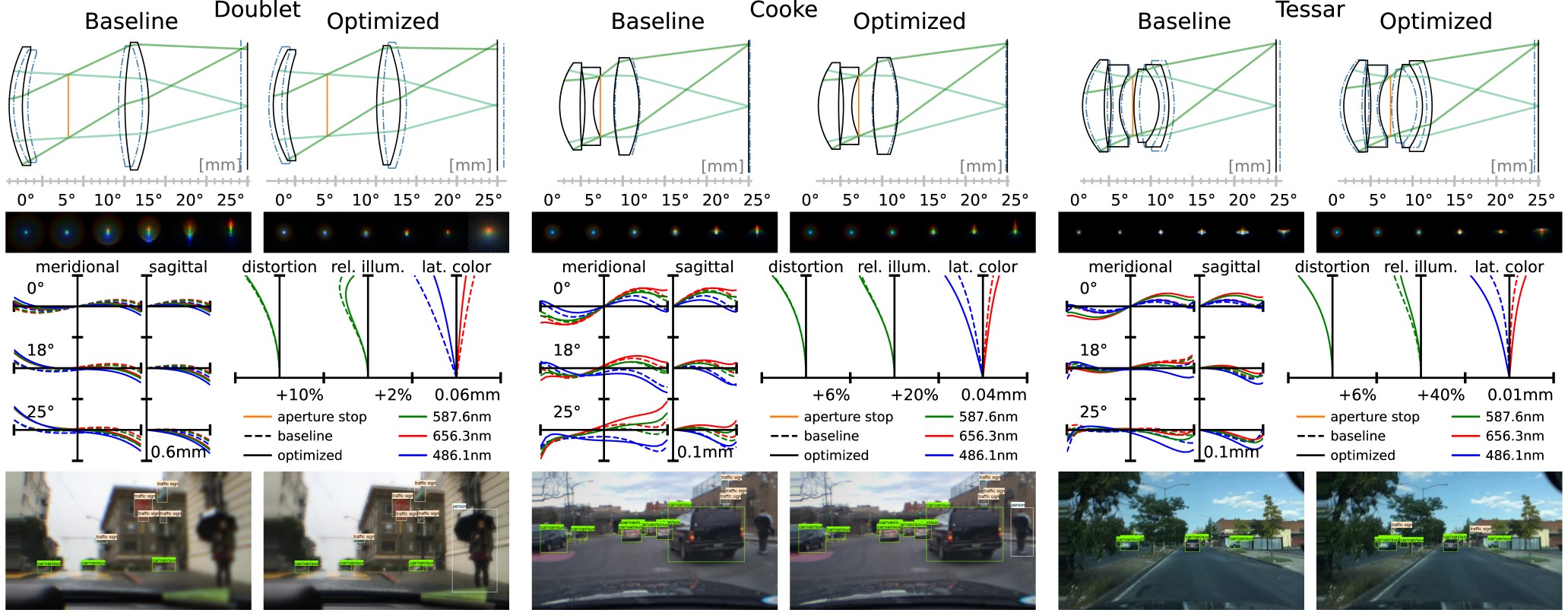

Two-element Doublet Lens

Three-element Cooke Triplet

Four-element Tessar Lens

Automotive Object Detection

We validate the proposed method on the task of automotive object detection. We consistently observe improvements in detection performance even when reducing the number of elements in a given lens stack. We note that the optimized lenses improve object detection performance despite a mean spot size similar to or worse than the baseline lenses, meaning that they trade image quality for additional performance in detection.

Related Publications

[1] Ethan Tseng, Ali Mosleh, Fahim Mannan, Karl St. Arnaud, Avinash Sharma, Yifan Peng, Alexander Braun, Derek Nowrouzezahrai, Jean-François Lalonde, Felix Heide. Differentiable Compound Optics and Processing Pipeline Optimization for End-to-end Camera Design. SIGGRAPH 2021