End-to-end High Dynamic Range Camera Pipeline Optimization

Nicolas Robidoux, Luis Eduardo García Capel, Dong-eun Seo, Avinash Sharma, Federico Ariza, Felix Heide

CVPR 2021

With a 280 dB dynamic range, the real world is a High Dynamic Range (HDR) world. Today's sensors cannot record this dynamic range in a single shot. Instead, HDR cameras acquire multiple measurements with different exposures, gains and photodiodes, from which an Image Signal Processor (ISP) reconstructs an HDR image. HDR image recovery for dynamic scenes is an open challenge because of motion and because stitched captures have different noise characteristics. The resulting artefacts must be resolved by the ISP in real time and at triple-digit megapixel resolutions. Traditionally, the hardware ISP settings used by downstream vision modules have been chosen by domain experts. Such frozen camera designs are then used for training data acquisition and supervised learning of downstream vision modules. We depart from this paradigm and formulate HDR ISP hyperparameter search as an end-to-end optimization problem. We propose a mixed 0th- and 1st-order block coordinate descent optimizer that jointly learns ISP and detector network weights using RAW image data augmented with emulated SNR transition region artefacts. We assess the proposed method for human vision and image understanding. For automotive object detection, the method improves mAP and mAR by 33% compared to expert tuning and by 22% compared to the recent state of the art. The method is validated in an HDR laboratory rig and in the field, outperforming conventional handcrafted HDR imaging and vision pipelines in all experiments.
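The mixed 0th- and 1st-order block coordinate descent described above alternates between two blocks of variables: ISP hyperparameters, which a hardware or black-box ISP exposes only through loss evaluations, and detector weights, which admit gradients. The toy below is a minimal sketch of that alternation under loud assumptions: the "ISP" is a hypothetical tone curve `r ** gamma` followed by 8-bit quantization, the "detector" is a two-parameter linear regressor, and a simple random search stands in for the zeroth-order optimizer, which is not necessarily the search strategy used in the paper. All function names are hypothetical.

```python
import random


def toy_isp(raw, gamma):
    # Hypothetical black-box ISP: tone curve r ** gamma, then 8-bit quantization.
    # The rounding makes the output piecewise constant (non-differentiable) in gamma.
    return [round(255 * r ** gamma) / 255 for r in raw]


def loss(raw, target, gamma, w):
    # Mean squared error of a hypothetical linear "detector" y = w[0] + w[1] * o
    # applied to the ISP output o.
    out = toy_isp(raw, gamma)
    return sum((w[0] + w[1] * o - t) ** 2 for o, t in zip(out, target)) / len(raw)


def first_order_step(raw, target, gamma, w, lr=0.2):
    # 1st-order block: one gradient-descent step on the detector weights, ISP fixed.
    # ISP outputs lie in [0, 1], so the loss curvature in w is at most 4 and
    # lr=0.2 cannot increase the loss.
    out = toy_isp(raw, gamma)
    n = len(raw)
    residuals = [w[0] + w[1] * o - t for o, t in zip(out, target)]
    g0 = sum(2 * e for e in residuals) / n
    g1 = sum(2 * e * o for e, o in zip(residuals, out)) / n
    return [w[0] - lr * g0, w[1] - lr * g1]


def zeroth_order_step(raw, target, gamma, w, rng, sigma=0.05, trials=8):
    # 0th-order block: random search on the ISP hyperparameter, detector fixed.
    # Only loss evaluations are used; a candidate is kept only if it lowers the loss.
    best_gamma, best_loss = gamma, loss(raw, target, gamma, w)
    for _ in range(trials):
        cand = max(0.05, best_gamma + rng.gauss(0.0, sigma))
        cand_loss = loss(raw, target, cand, w)
        if cand_loss < best_loss:
            best_gamma, best_loss = cand, cand_loss
    return best_gamma


def block_coordinate_descent(raw, target, gamma=0.5, w=(0.0, 1.0),
                             rounds=50, inner_steps=20, seed=0):
    # Alternate the two blocks and record the loss after every round.
    rng = random.Random(seed)
    w = list(w)
    history = [loss(raw, target, gamma, w)]
    for _ in range(rounds):
        gamma = zeroth_order_step(raw, target, gamma, w, rng)
        for _ in range(inner_steps):
            w = first_order_step(raw, target, gamma, w)
        history.append(loss(raw, target, gamma, w))
    return gamma, w, history


# Toy task: recover the linear scene value from the tone-mapped, quantized output.
raw = [i / 99 for i in range(100)]
target = list(raw)
gamma, w, history = block_coordinate_descent(raw, target)
print(f"gamma={gamma:.3f}, loss {history[0]:.5f} -> {history[-1]:.5f}")
```

Because the random search only accepts improvements and the gradient step size is small enough for this quadratic loss, each block is non-increasing, so the recorded loss never rises from one round to the next. A real pipeline would replace both toy blocks with the actual ISP and detector network.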

Paper

Nicolas Robidoux, Luis Eduardo García Capel, Dong-eun Seo, Avinash Sharma, Federico Ariza, Felix Heide

End-to-end High Dynamic Range Camera Pipeline Optimization

CVPR 2021

HDR Optimization for Human Viewing

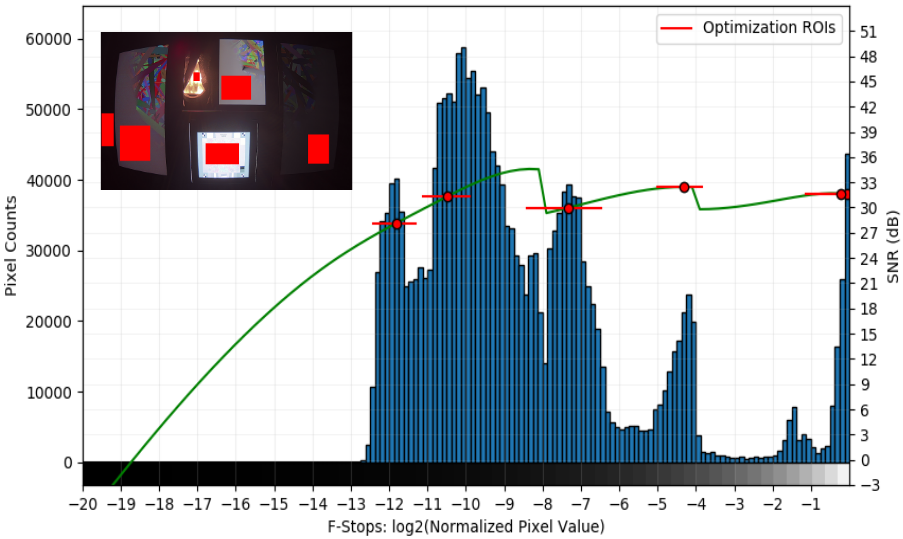

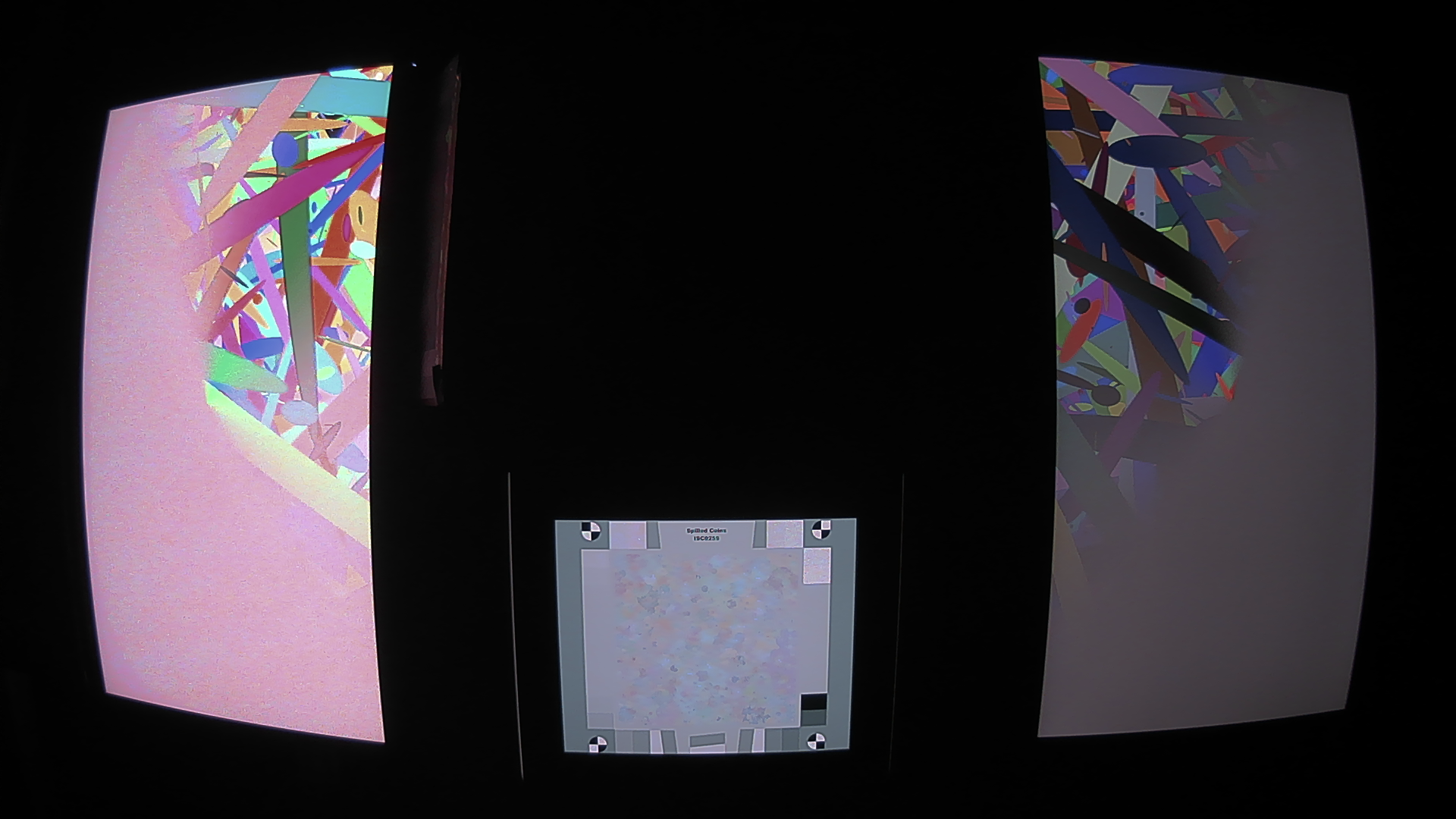

Scenarios used for HDR perceptual IQ optimization.

Seven scenarios used for end-to-end loss-based HDR optimization for perceptual image quality (IQ), with the corresponding ISP output. Top row: expert-tuned results. Middle row: results with the proposed method. Bottom row: zoom-ins. In each triple, the first enlargement is a crop of one of the expert-tuned captures (top row), the second is the same crop optimized with the proposed method (middle row), and the third is the corresponding area of the displayed chart (the second triple also shows the monitor logo outside the chart). The proposed method preserves detail at all luminance levels. (Gamma correction applied to facilitate crop visualization.)
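The captions note that gamma correction was applied so that crops of linear HDR data are visible. The page does not say which curve was used; the sketch below assumes a plain power law with the common display exponent 2.2, applied after normalizing each crop by its peak value. The helper name `gamma_for_display` is hypothetical.

```python
def gamma_for_display(crop, gamma=2.2):
    # Hypothetical helper: map a crop of linear pixel values to [0, 1] for viewing.
    # The exponent 2.2 is an assumed display gamma, not taken from the paper.
    peak = max(max(row) for row in crop)
    if peak <= 0:
        # Nothing to normalize by; return a black crop of the same shape.
        return [[0.0 for _ in row] for row in crop]
    # Clip negatives (e.g. after black-level subtraction) before the power law,
    # since a negative float raised to a fractional power is complex in Python.
    return [[(max(v, 0.0) / peak) ** (1.0 / gamma) for v in row] for row in crop]


# Four linear values spanning three decades: the power law lifts the darkest
# value from 0.1% of peak to a few percent, making shadow detail visible.
crop = [[0.001, 0.01], [0.1, 1.0]]
display = gamma_for_display(crop)
print(display)
```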

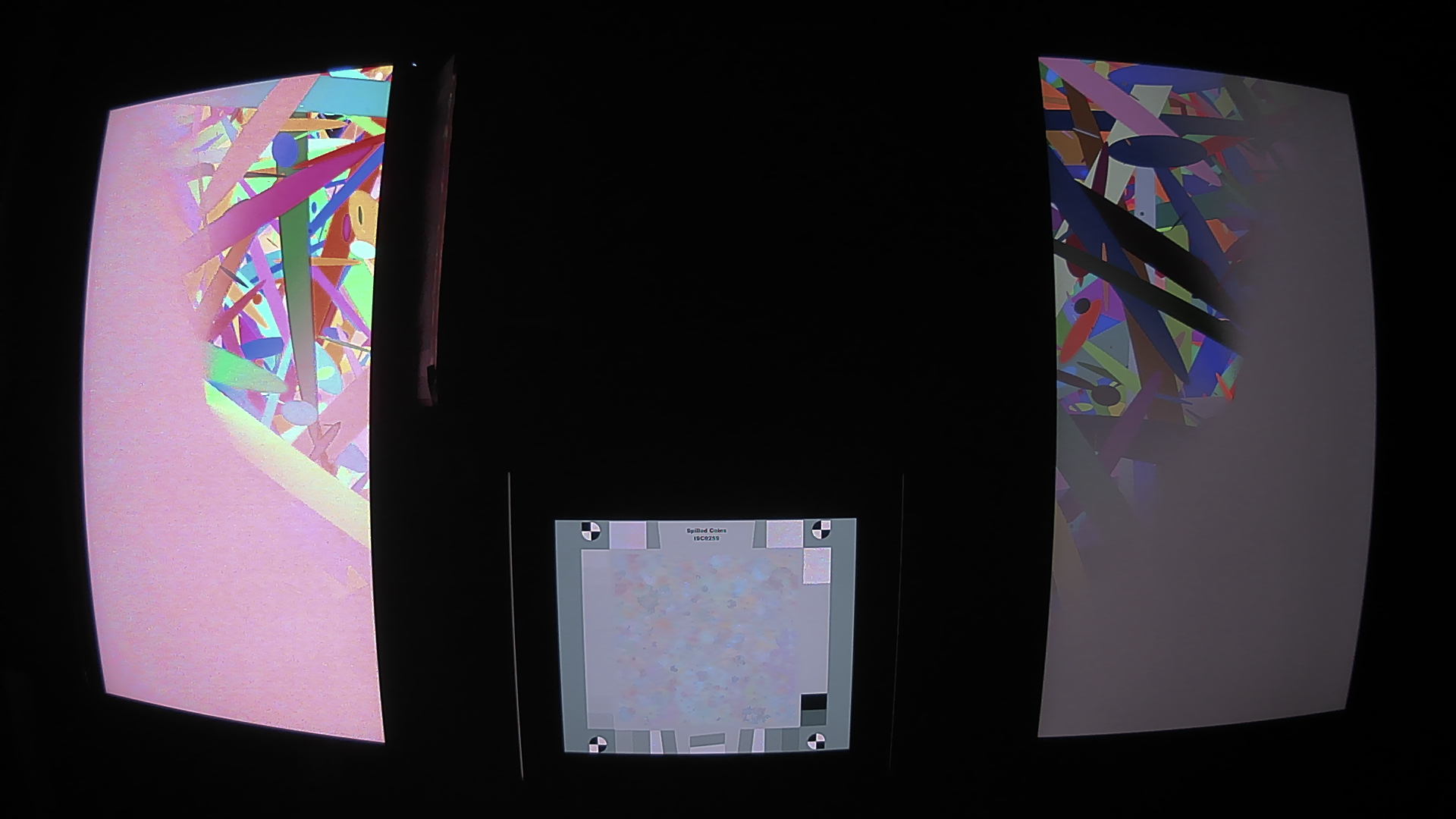

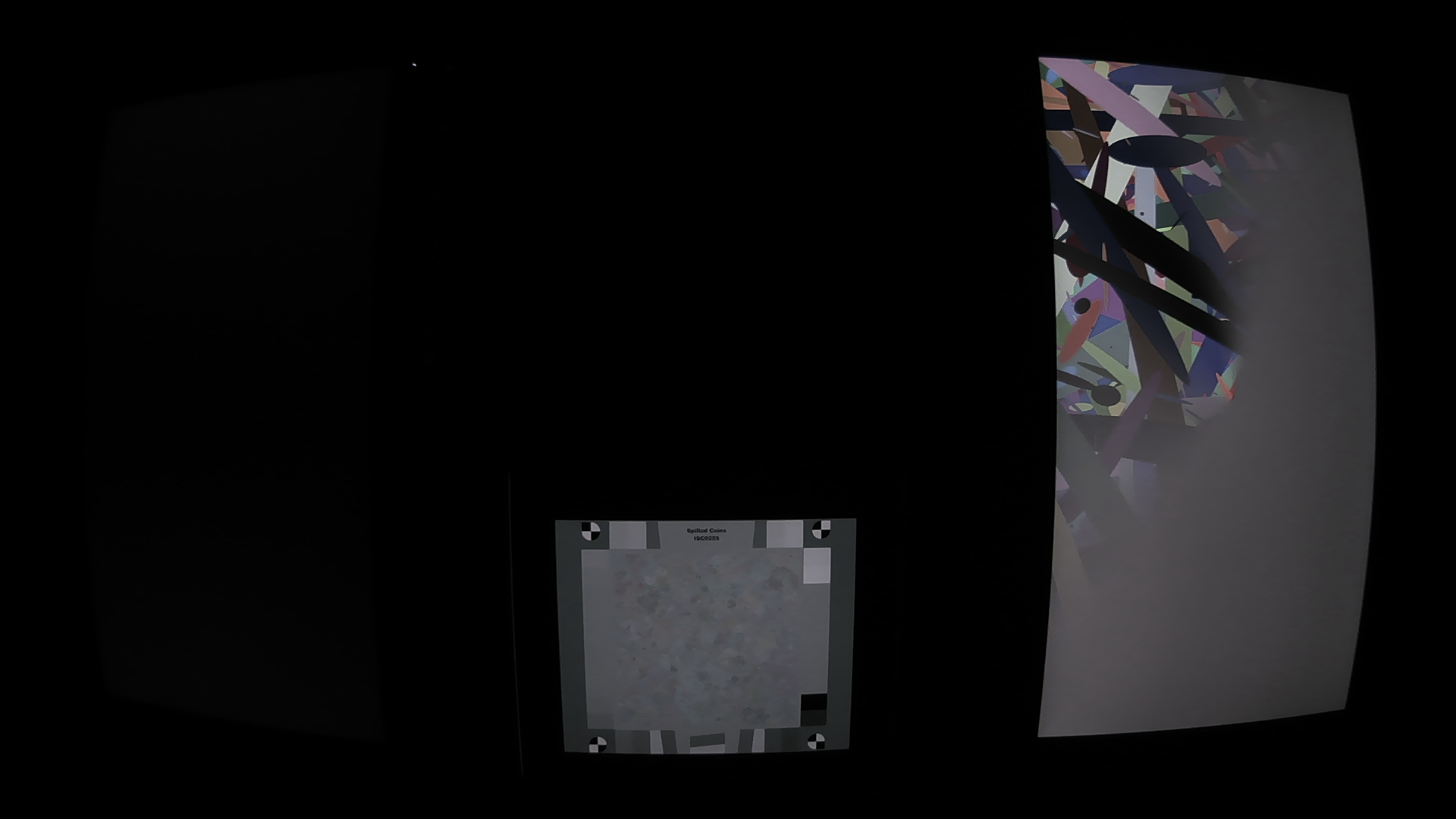

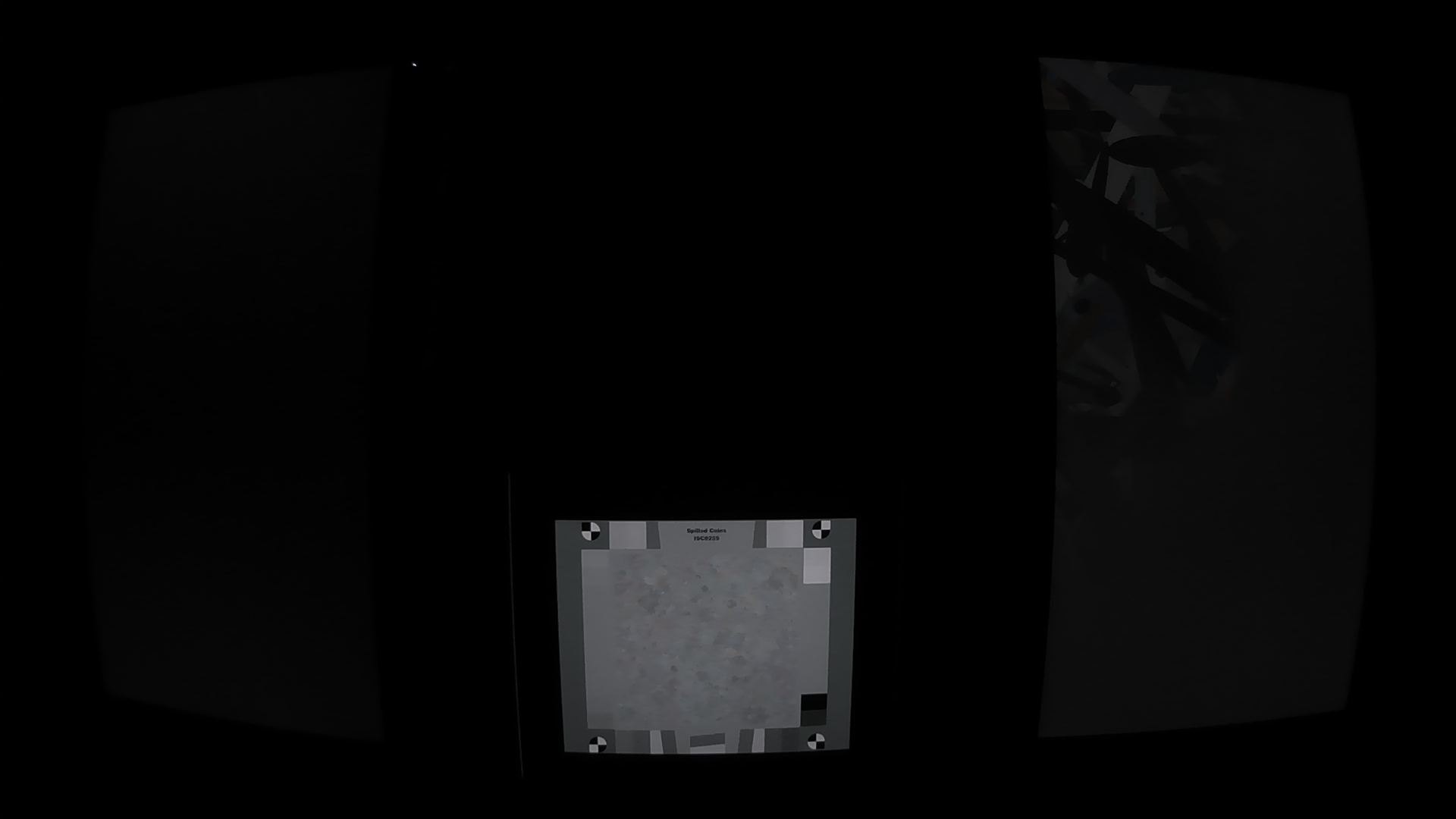

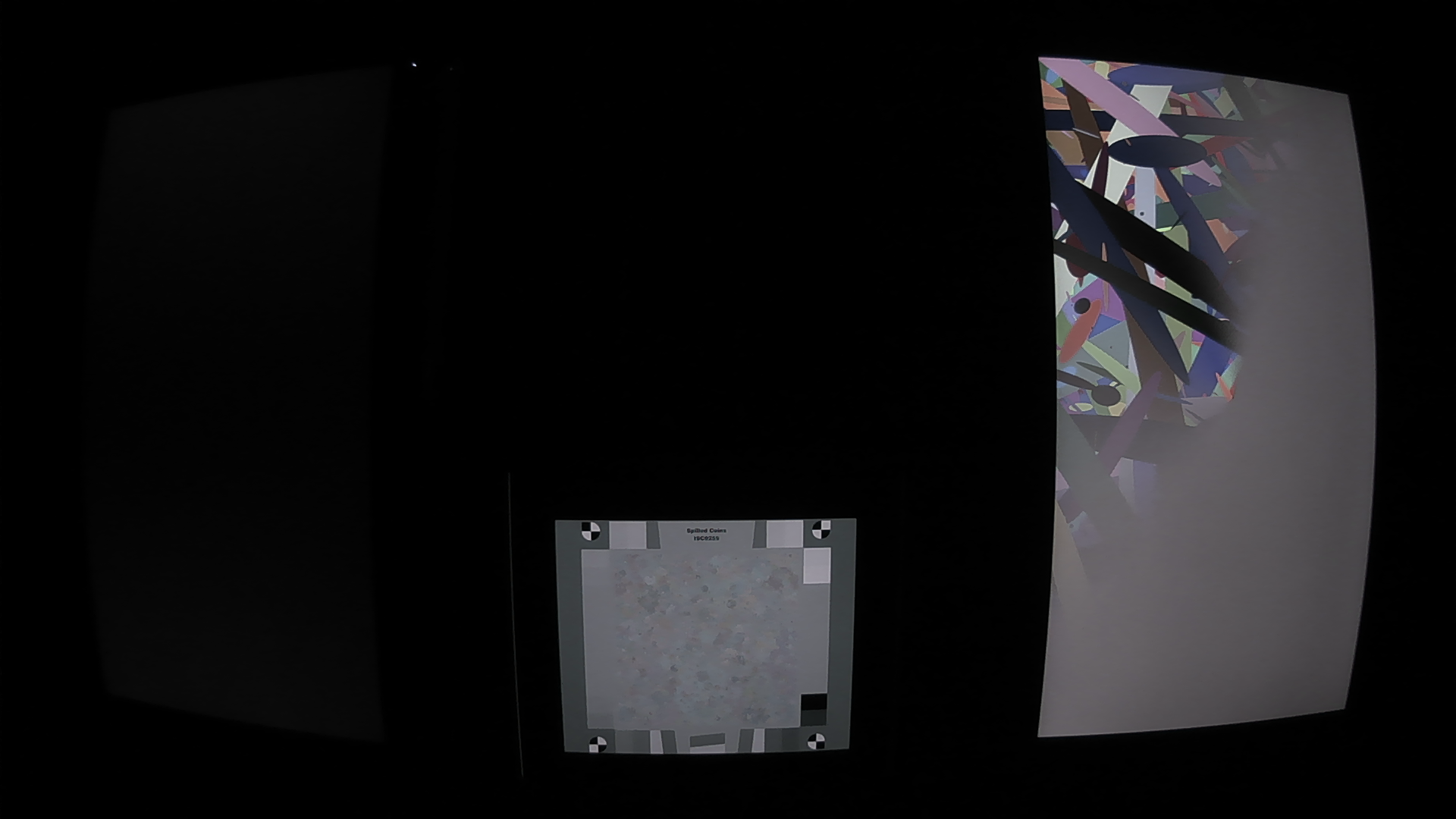

Results of ISP optimization for perceptual IQ.

Results of ISP optimization for human viewing. Top two rows: HDR lab scene. Bottom two rows: Real-life HDR scene. Rows 1 and 3: Results with expert-tuned settings. Rows 2 and 4: Results with settings obtained with the proposed method. The proposed method provides more detail and better dynamic range compression and local contrast. Please zoom in. (Gamma correction applied to facilitate crop visualization.)

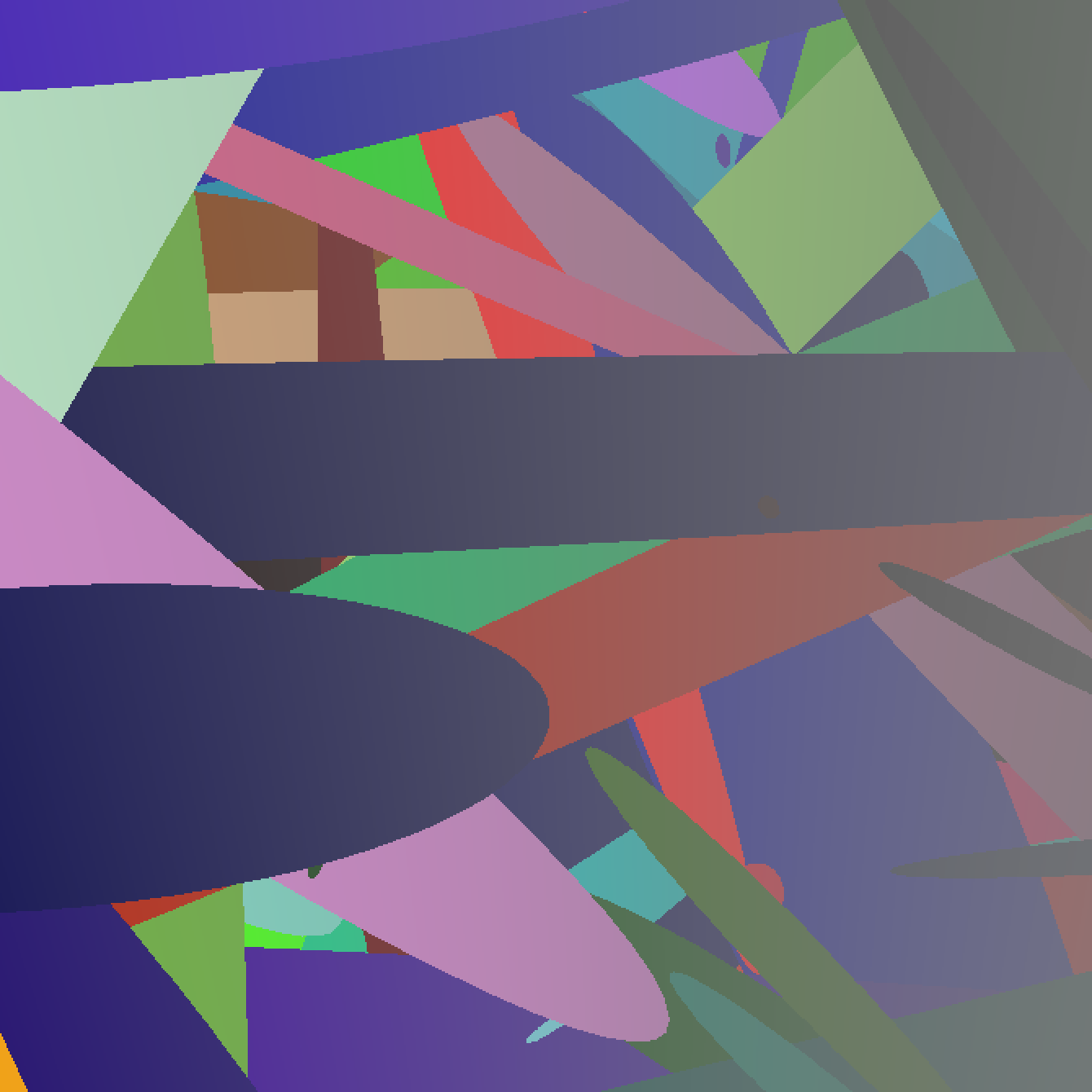

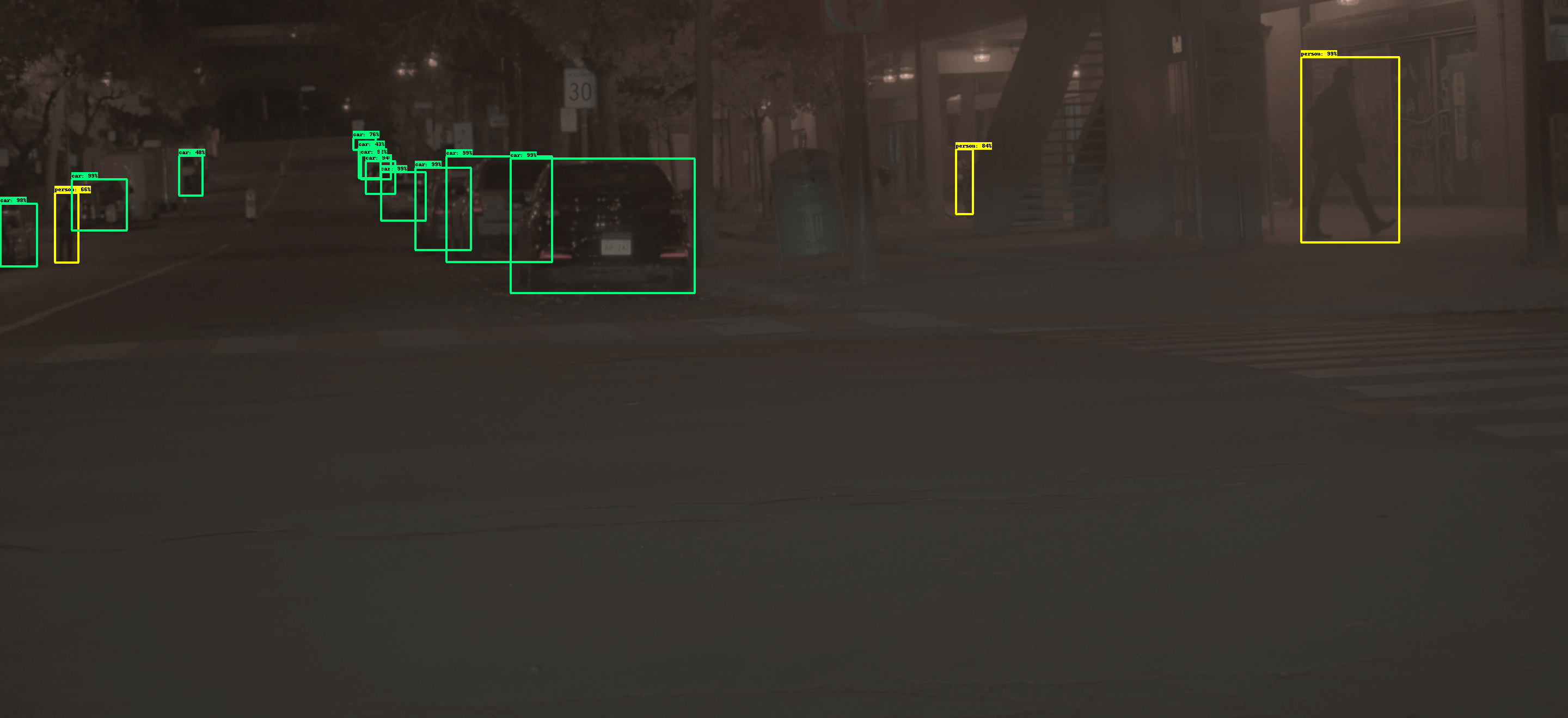

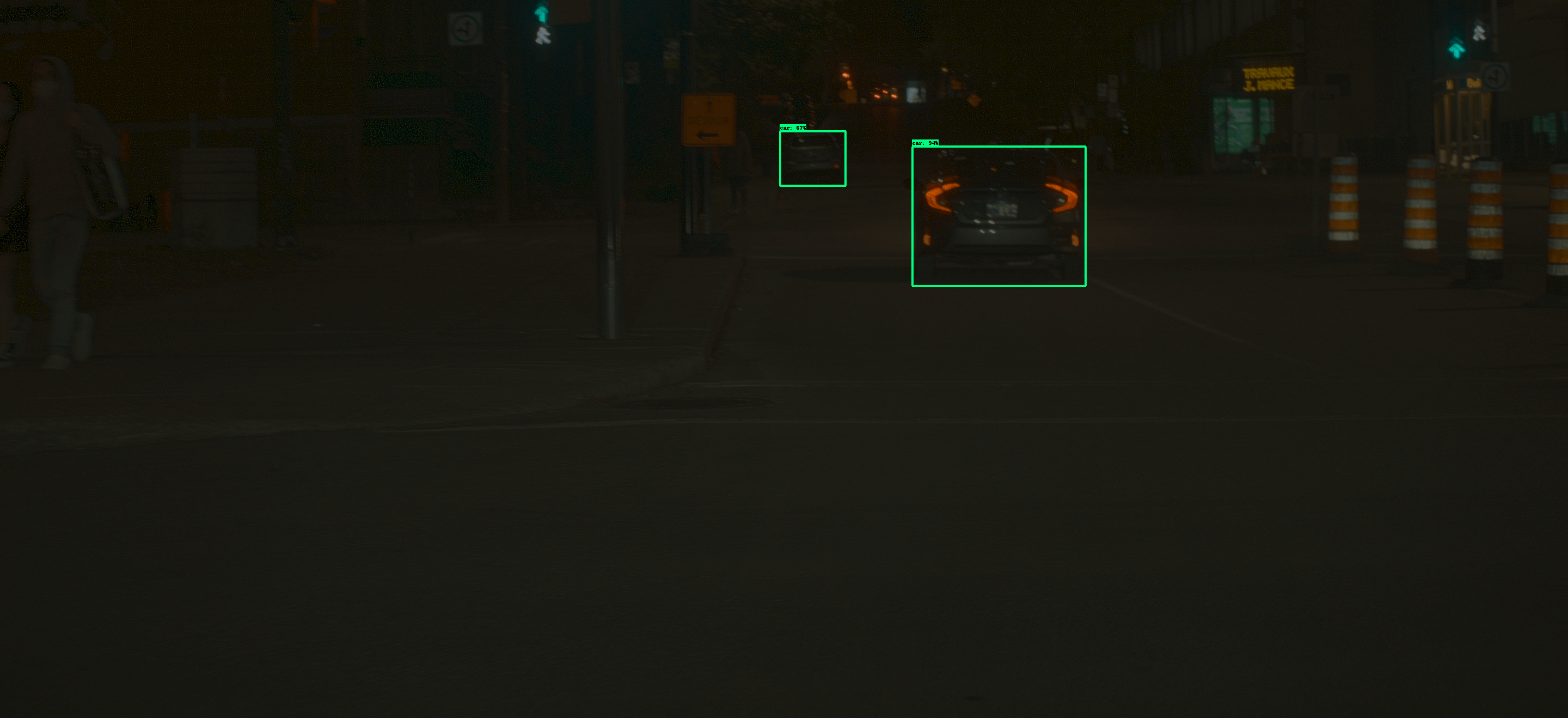

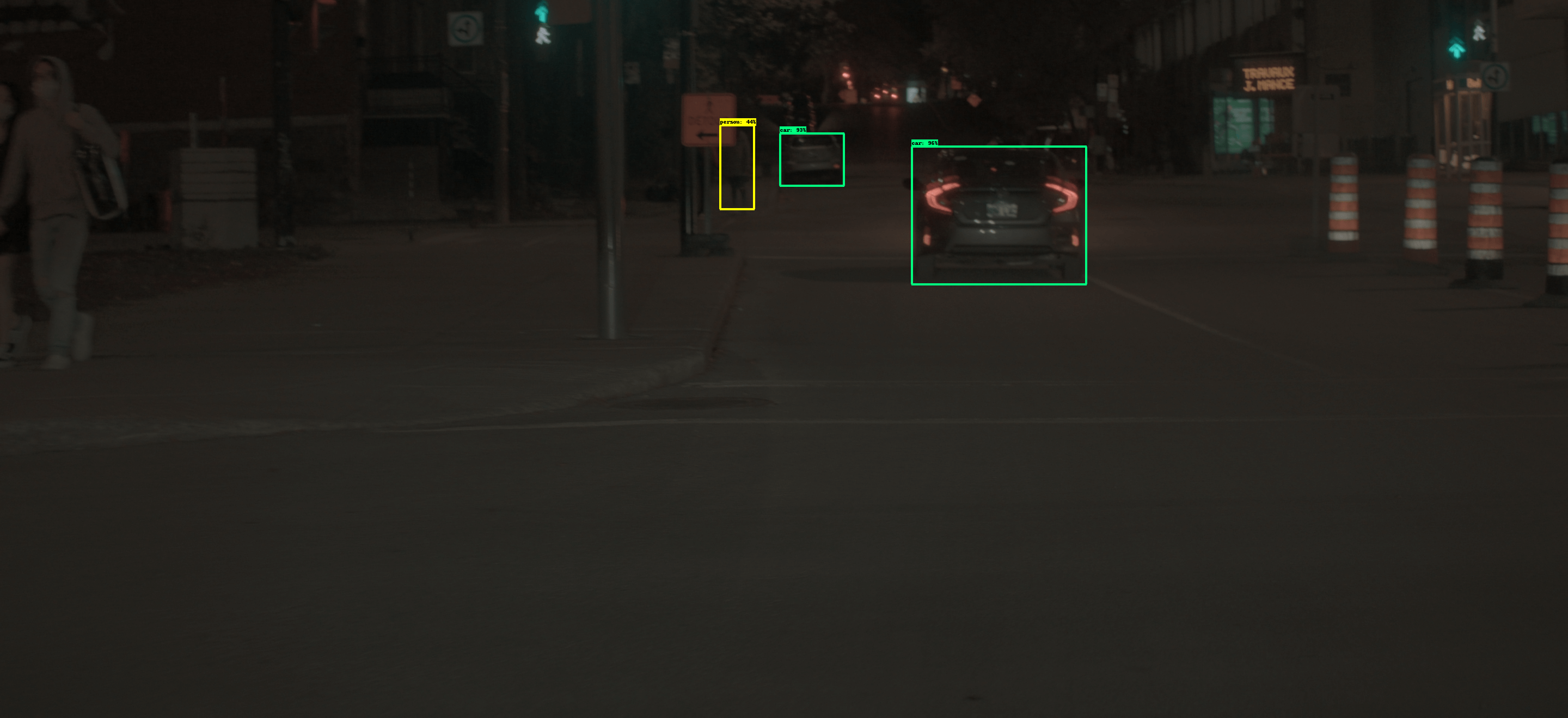

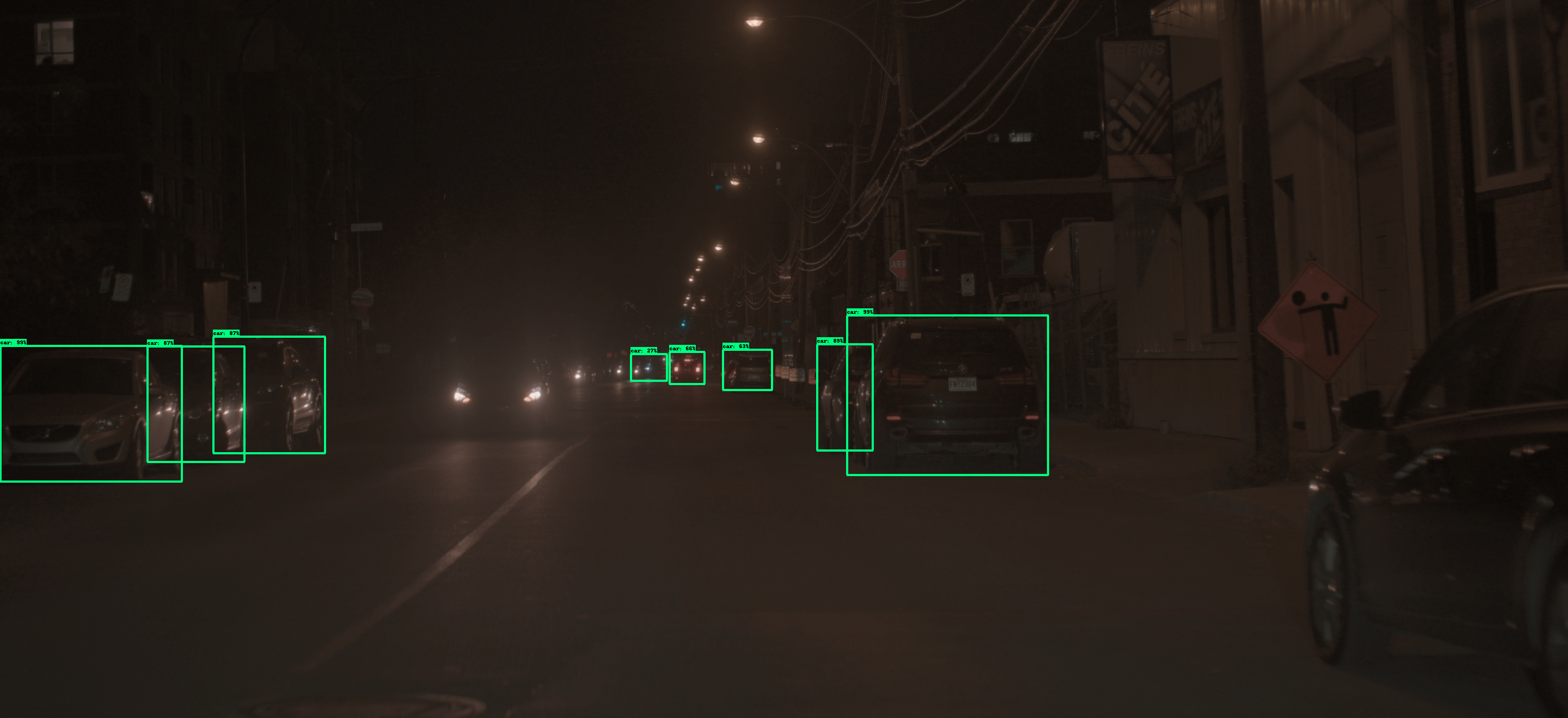

Joint HDR and CNN optimization for object detection and classification

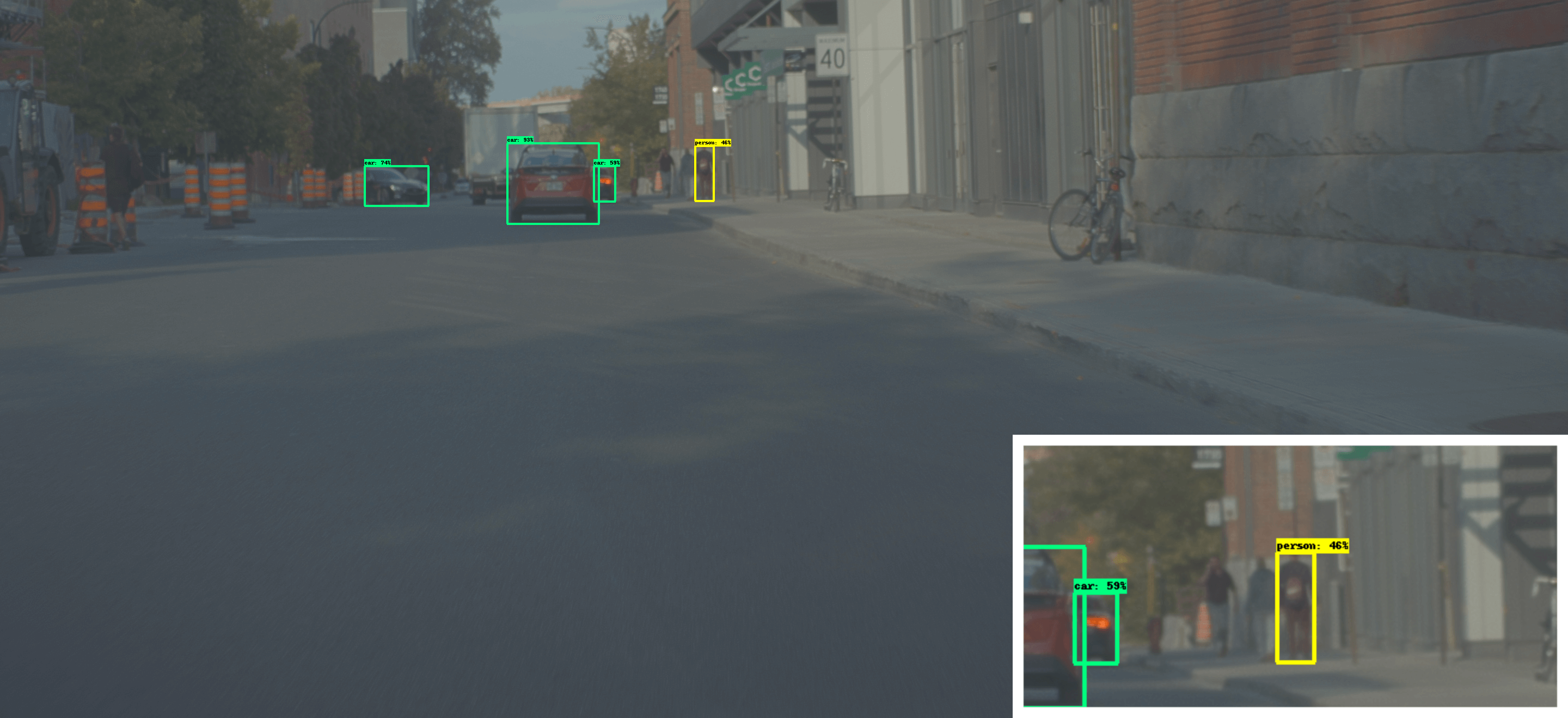

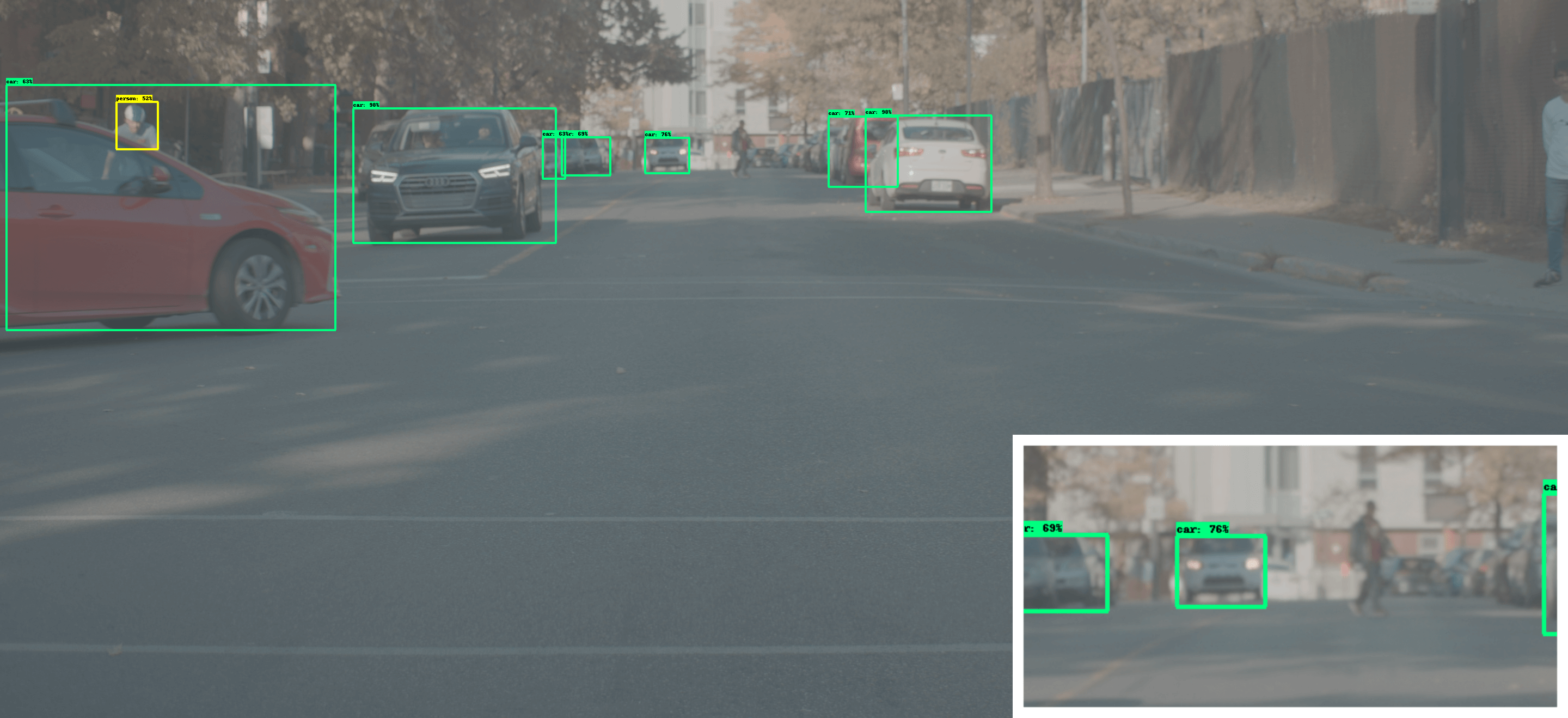

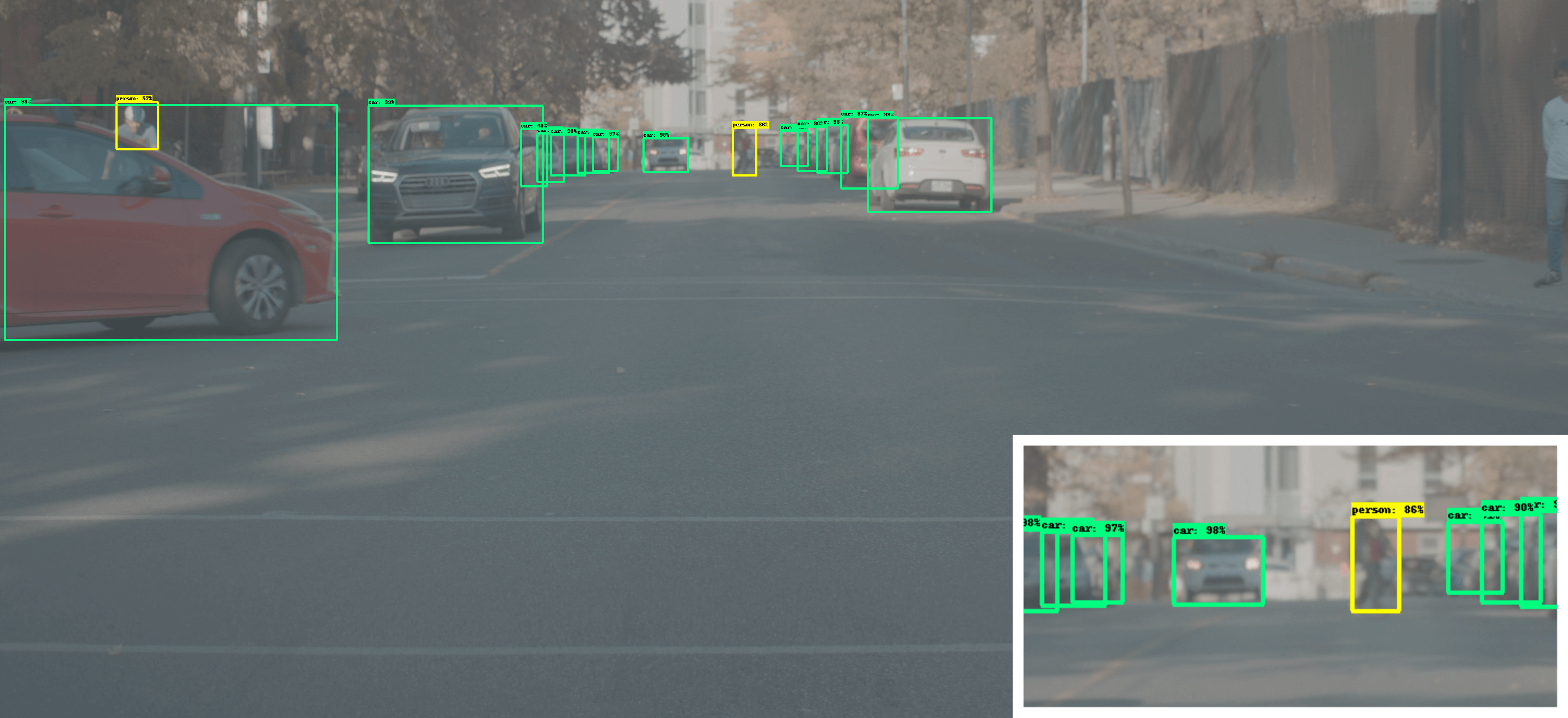

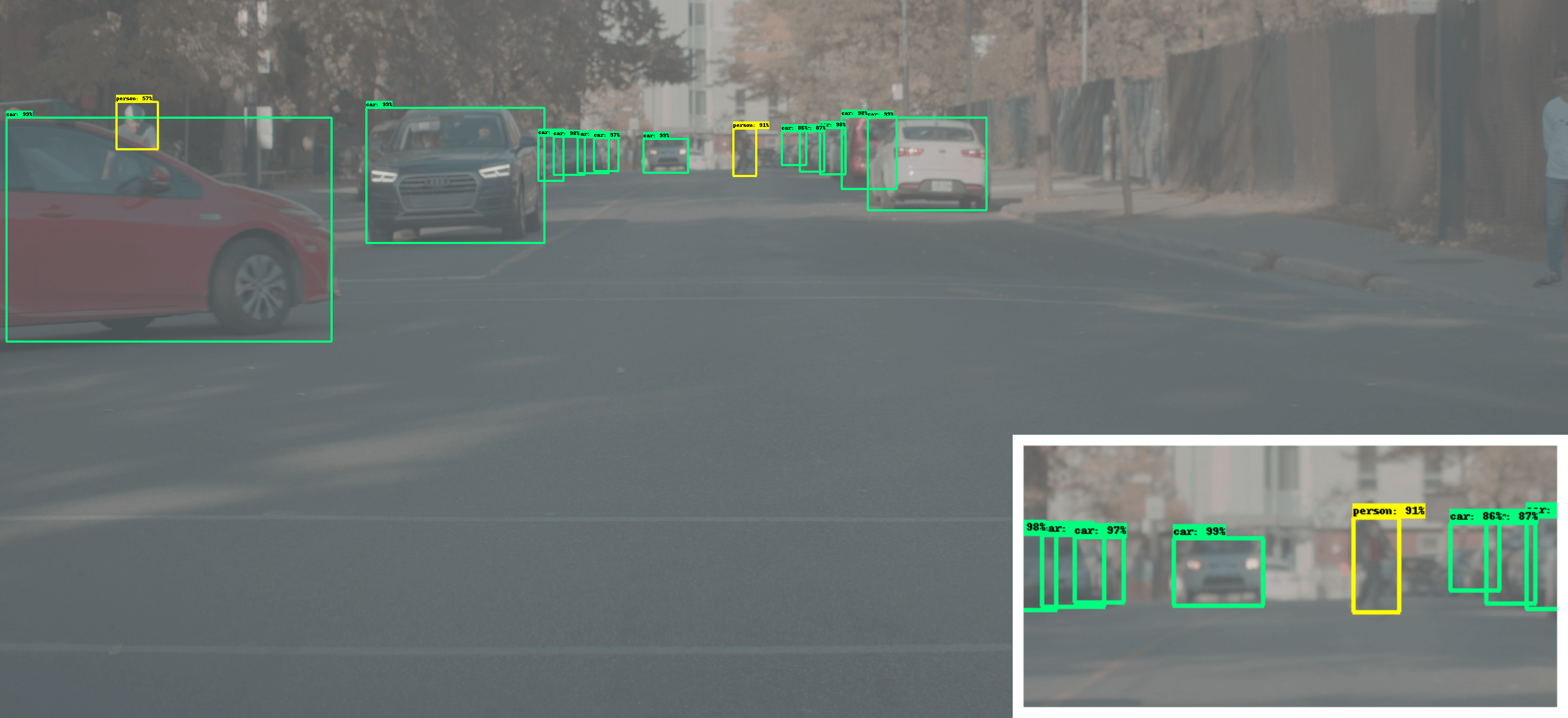

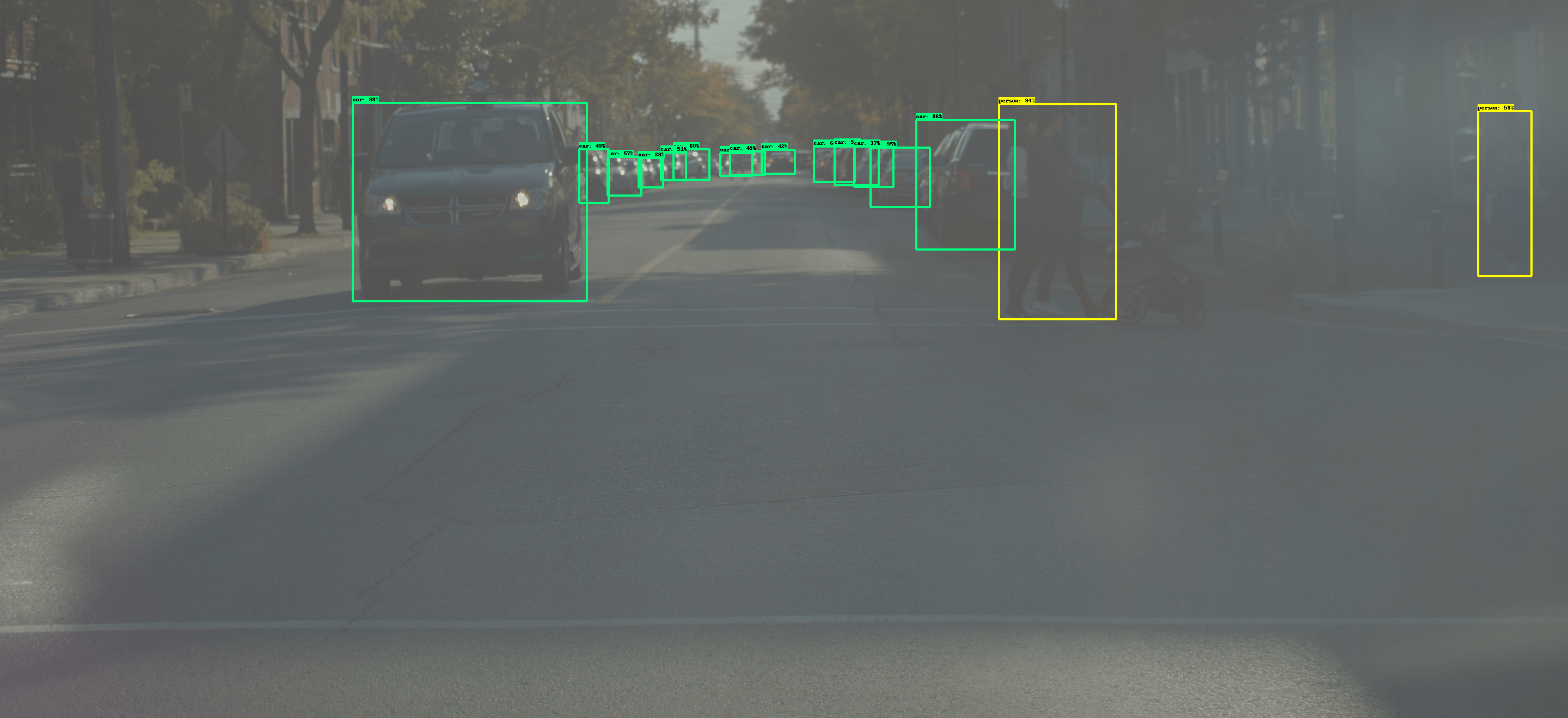

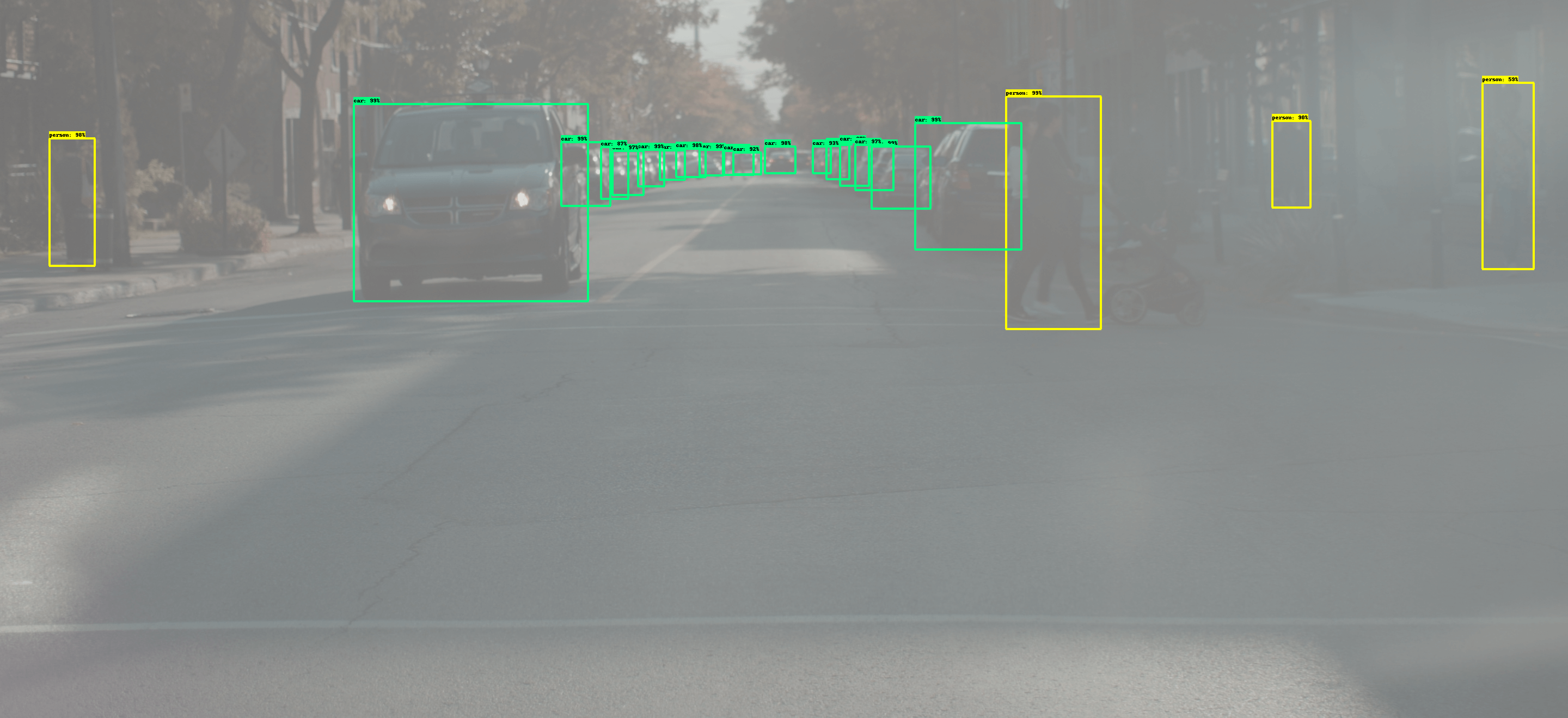

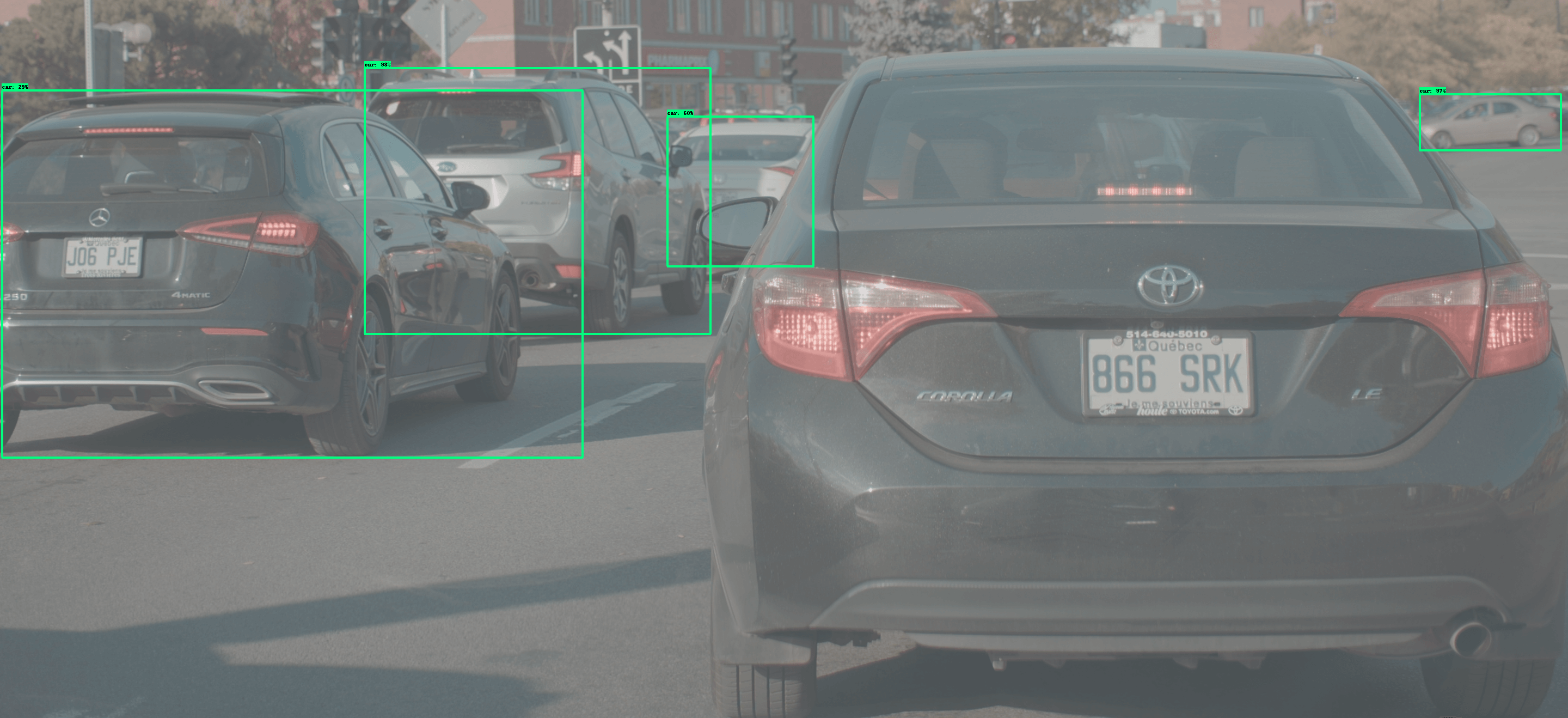

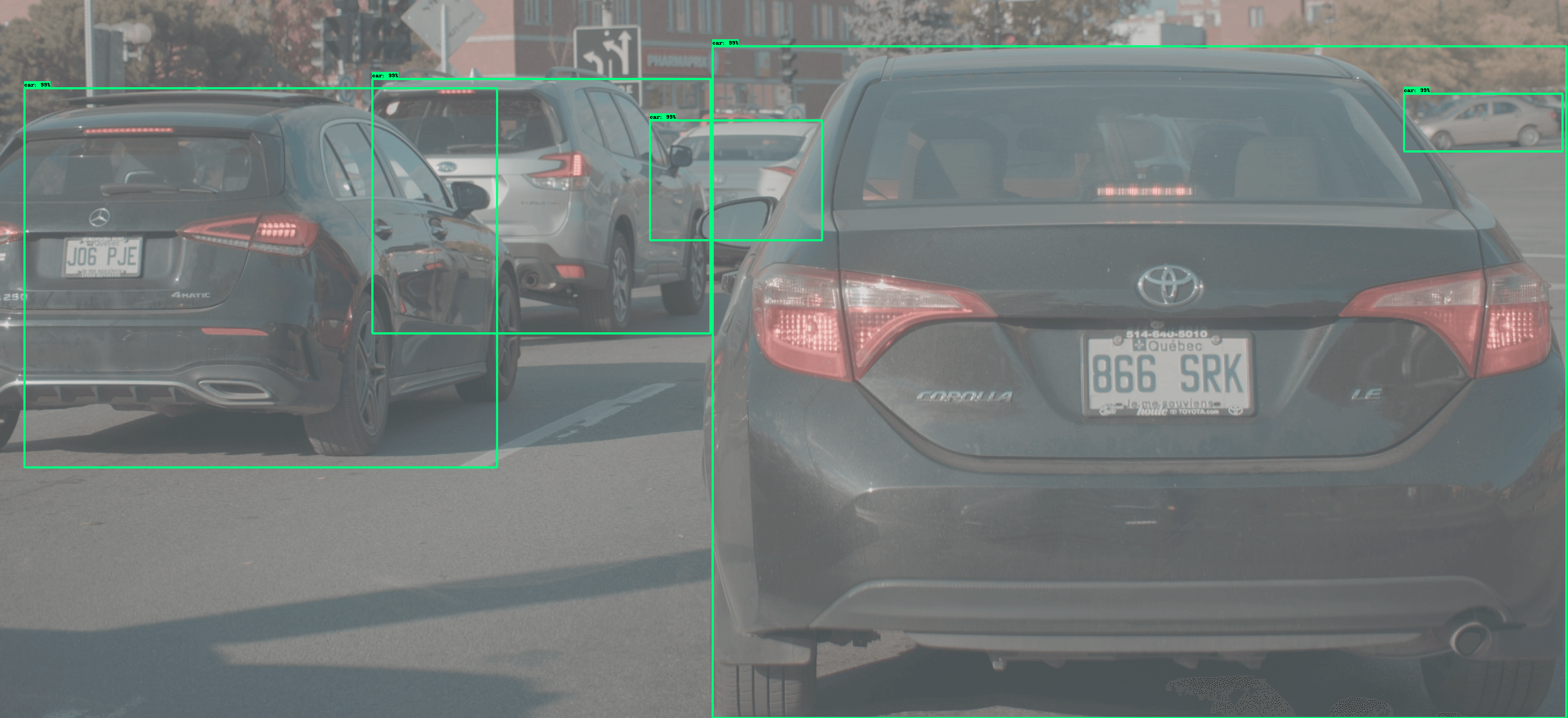

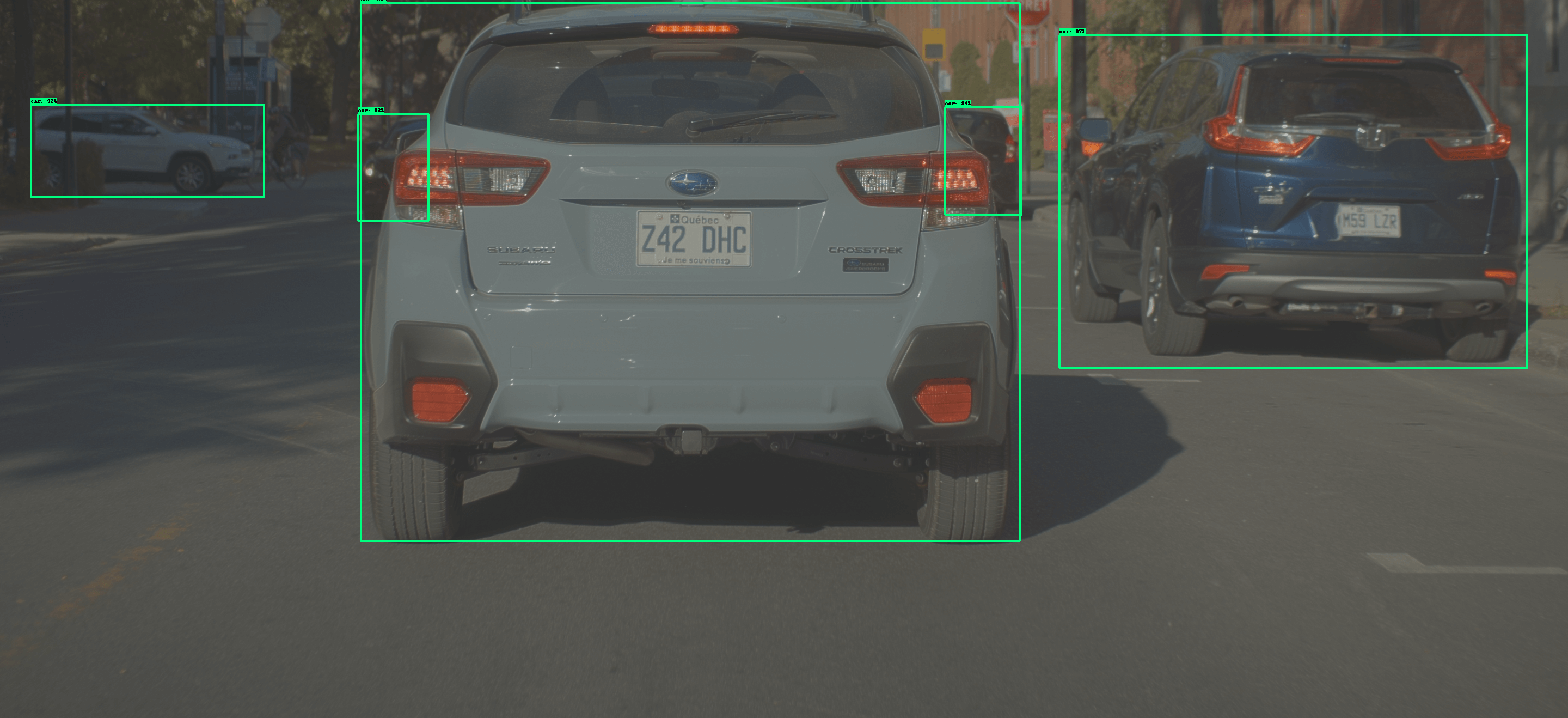

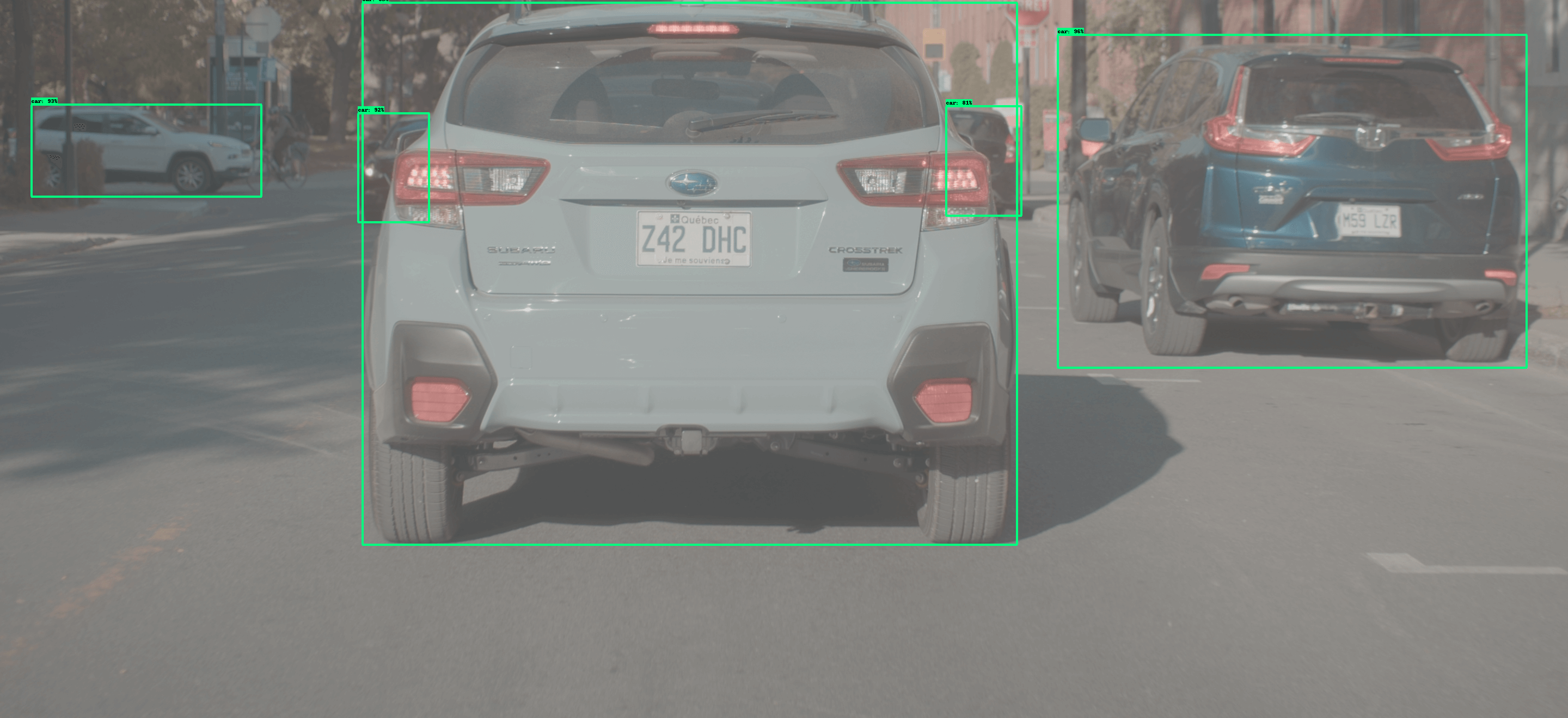

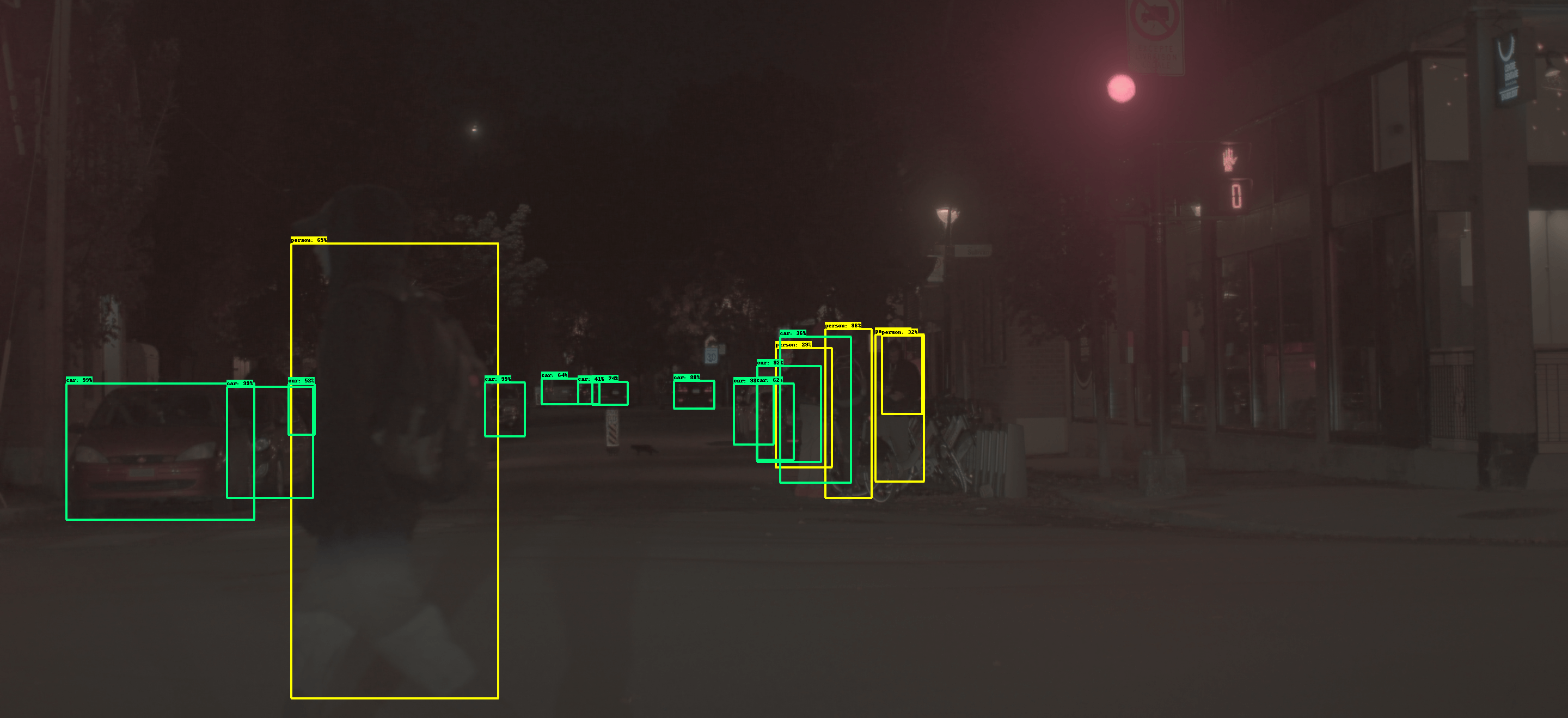

Expert-tuned

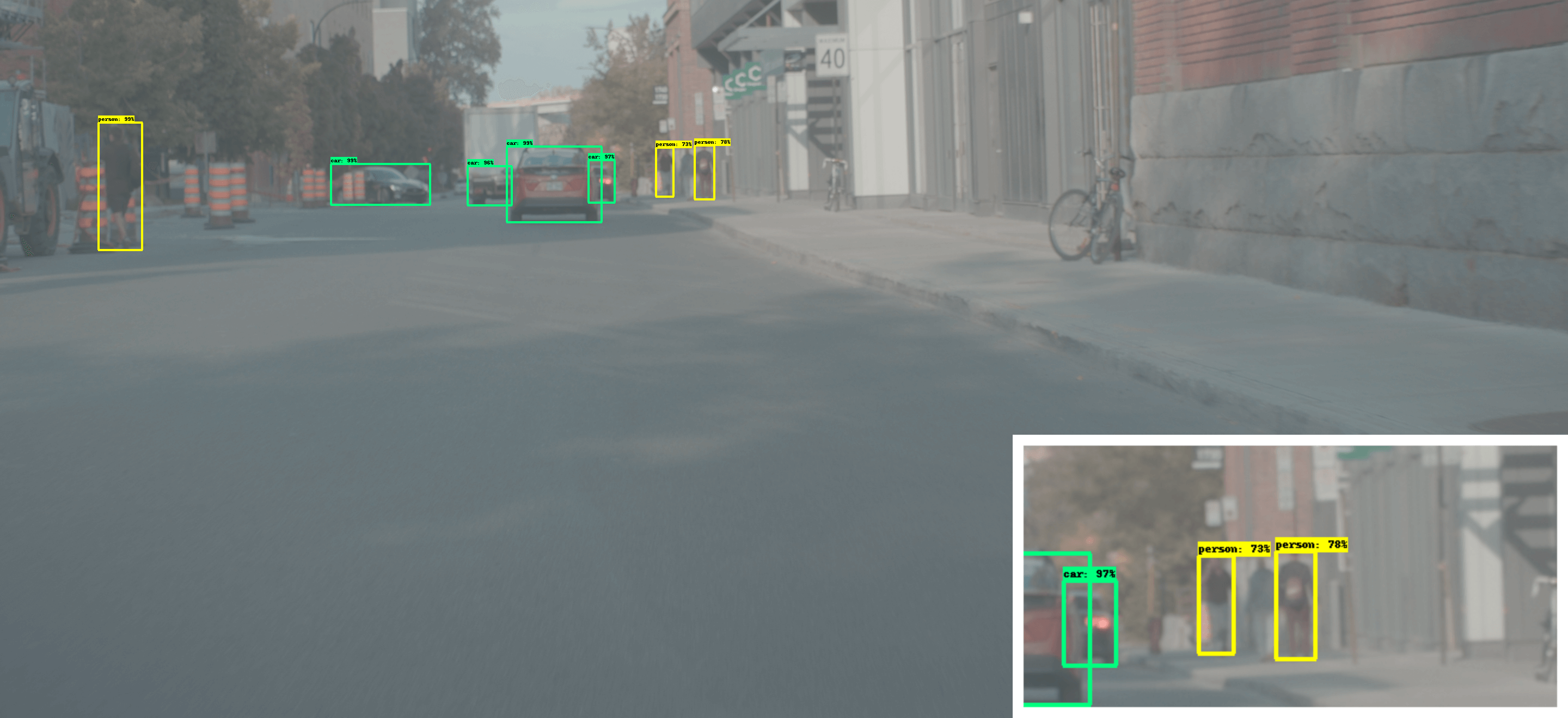

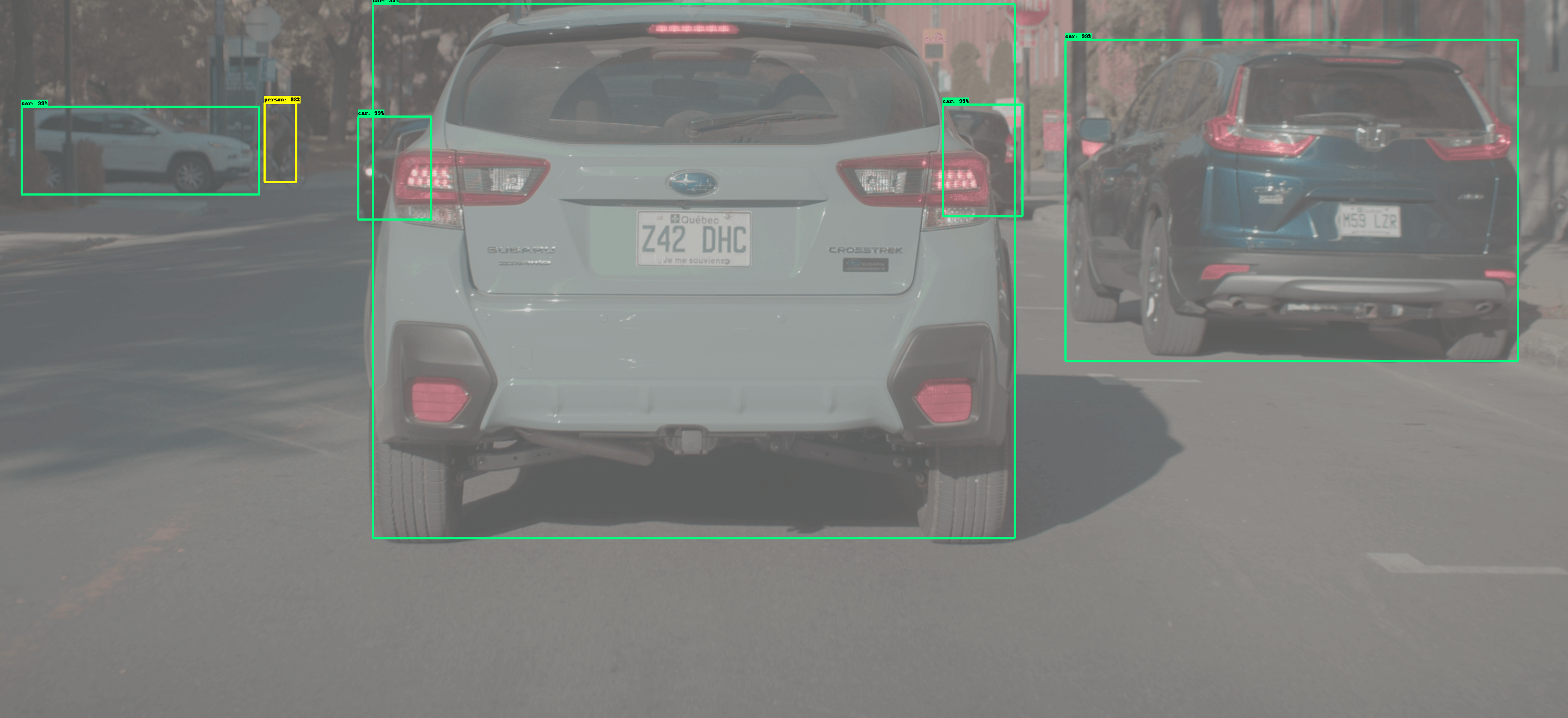

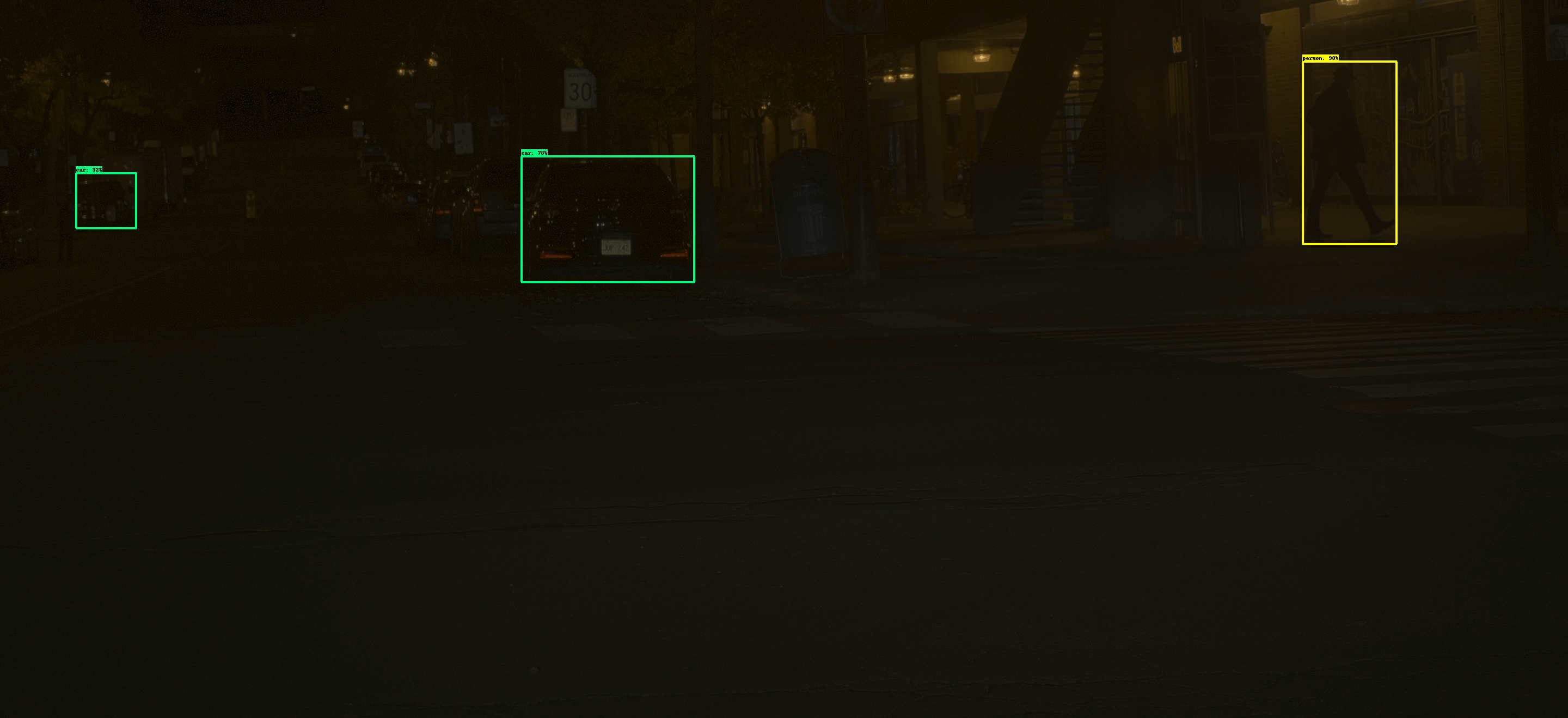

Mosleh et al. [1]

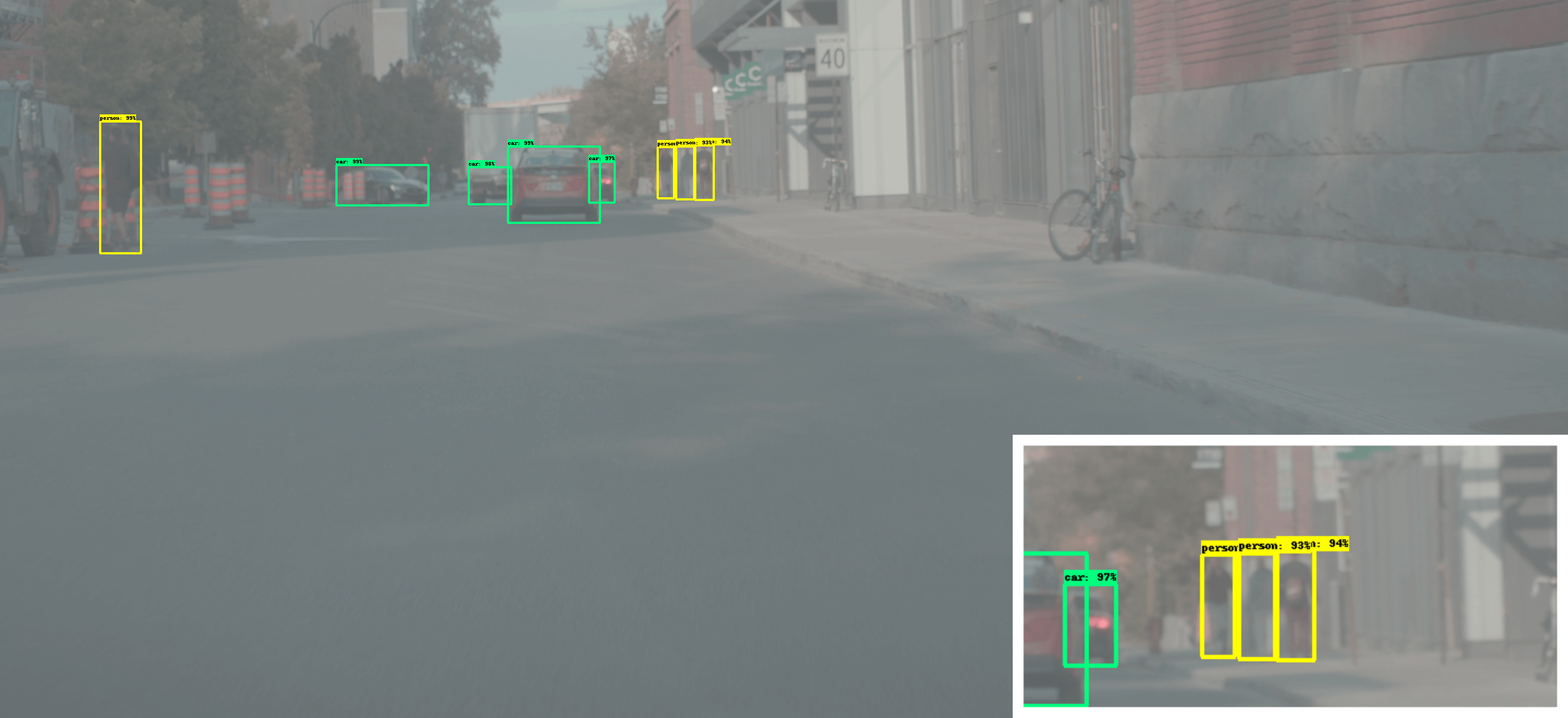

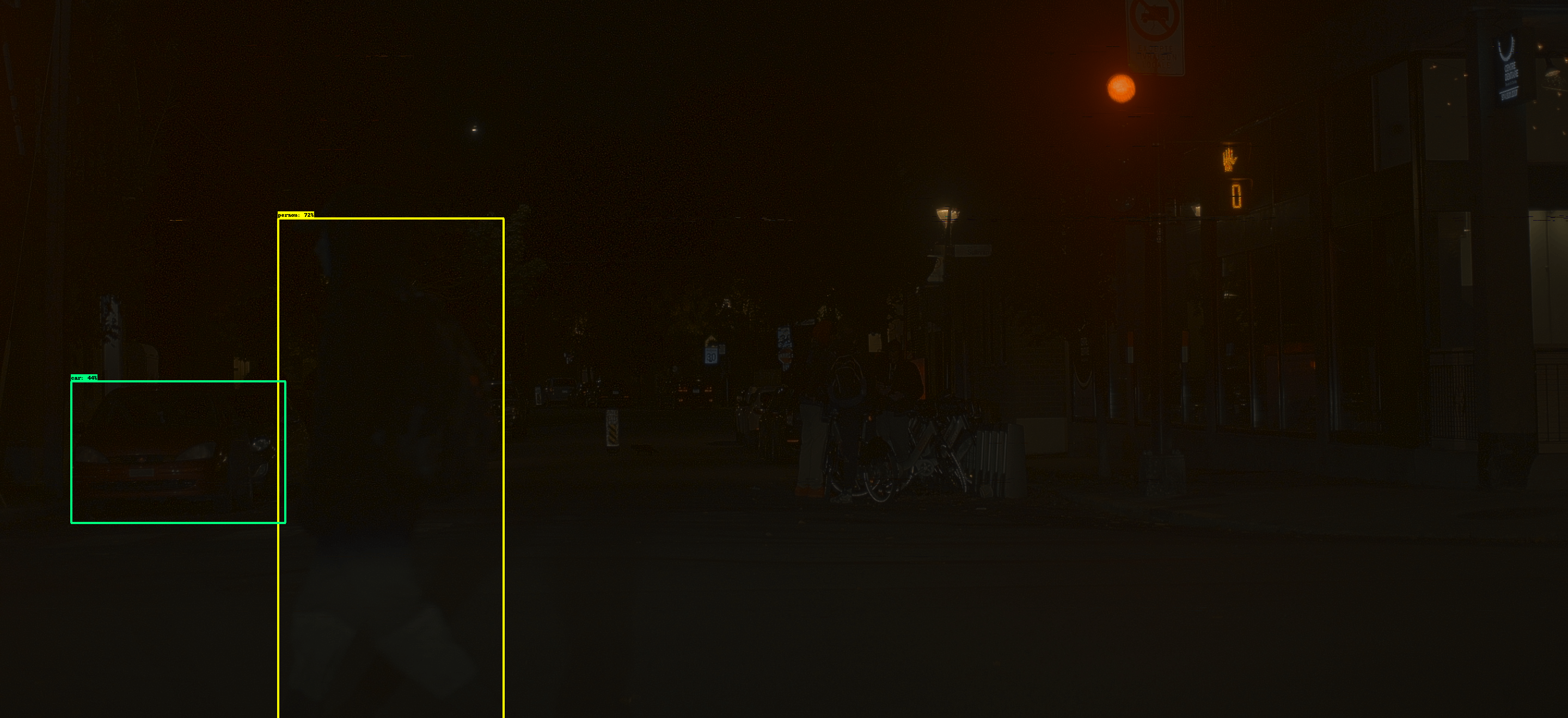

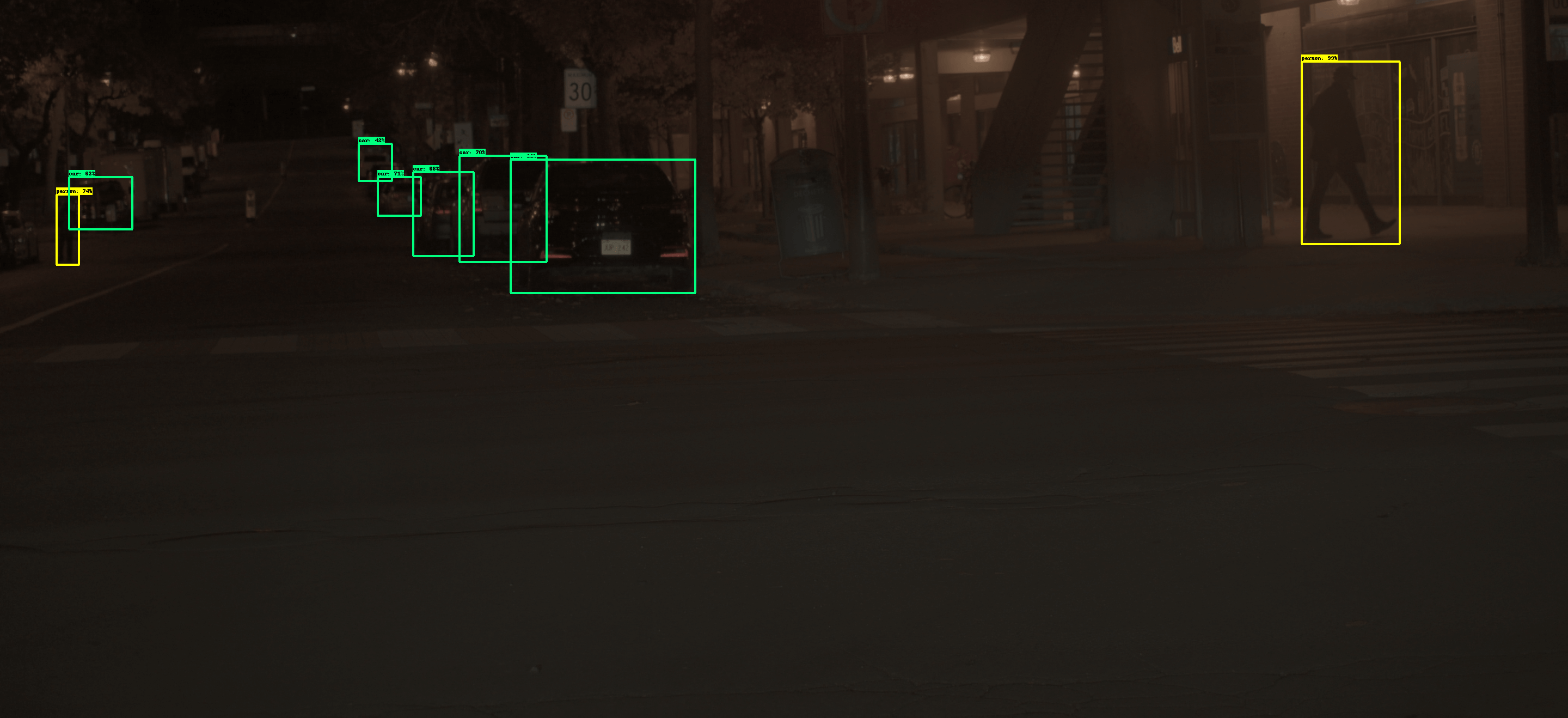

Proposed (1 iteration)

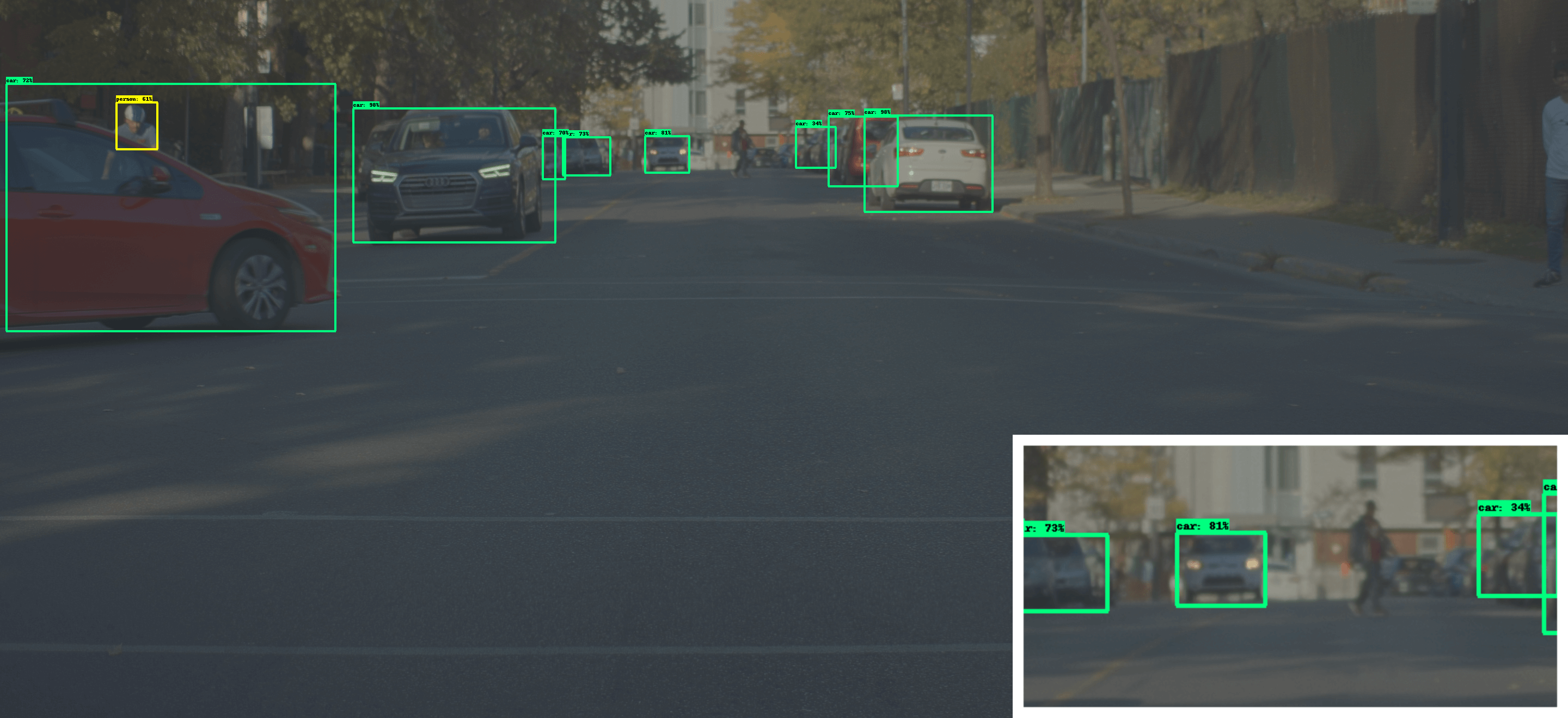

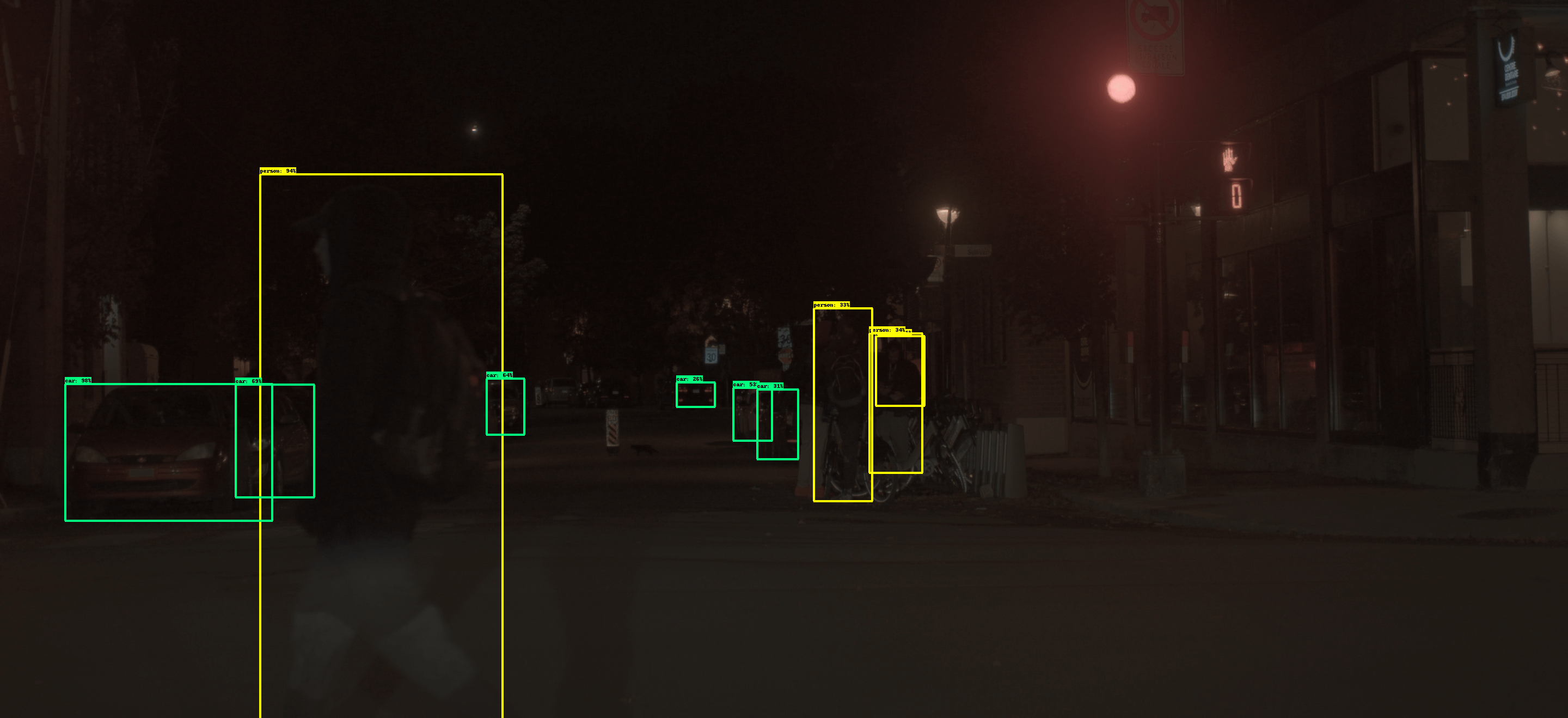

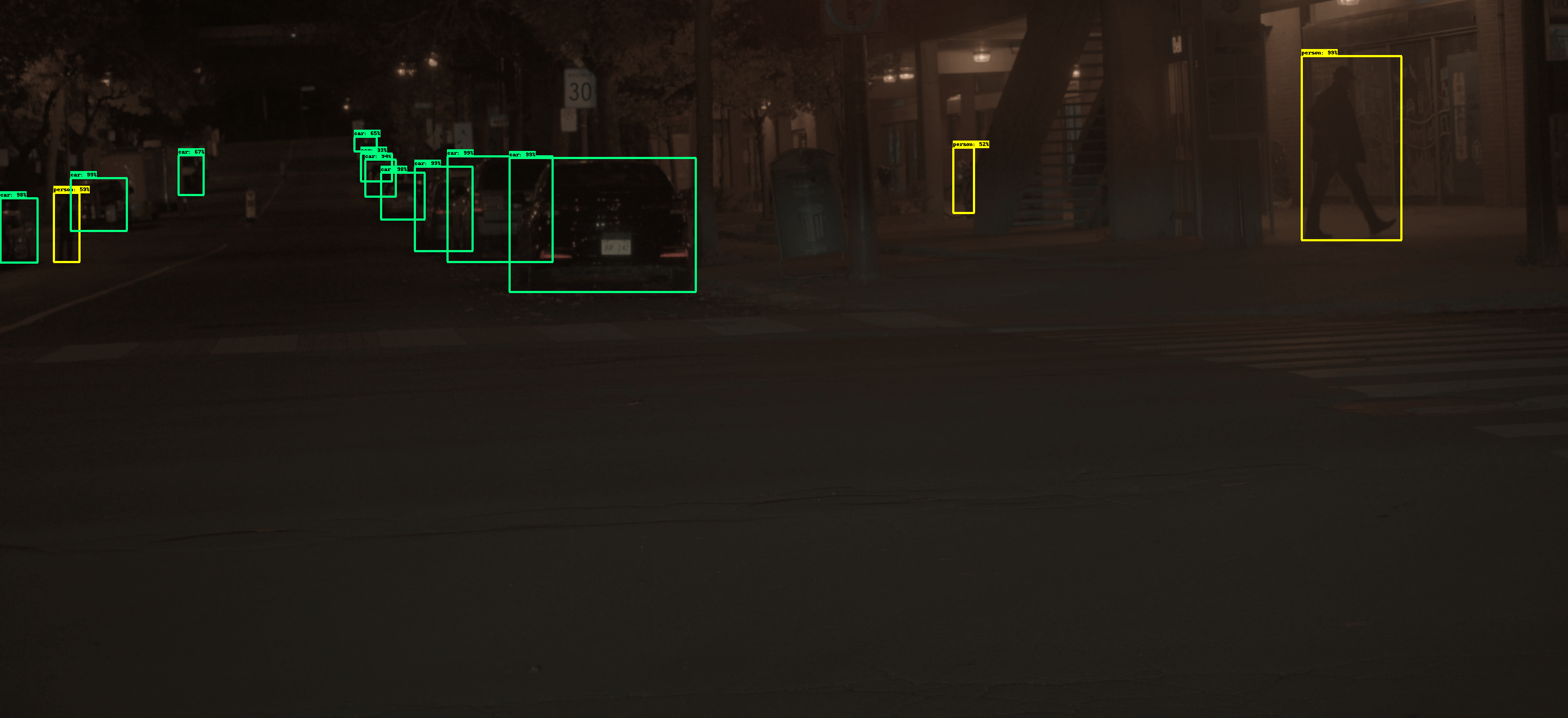

Proposed (converged)

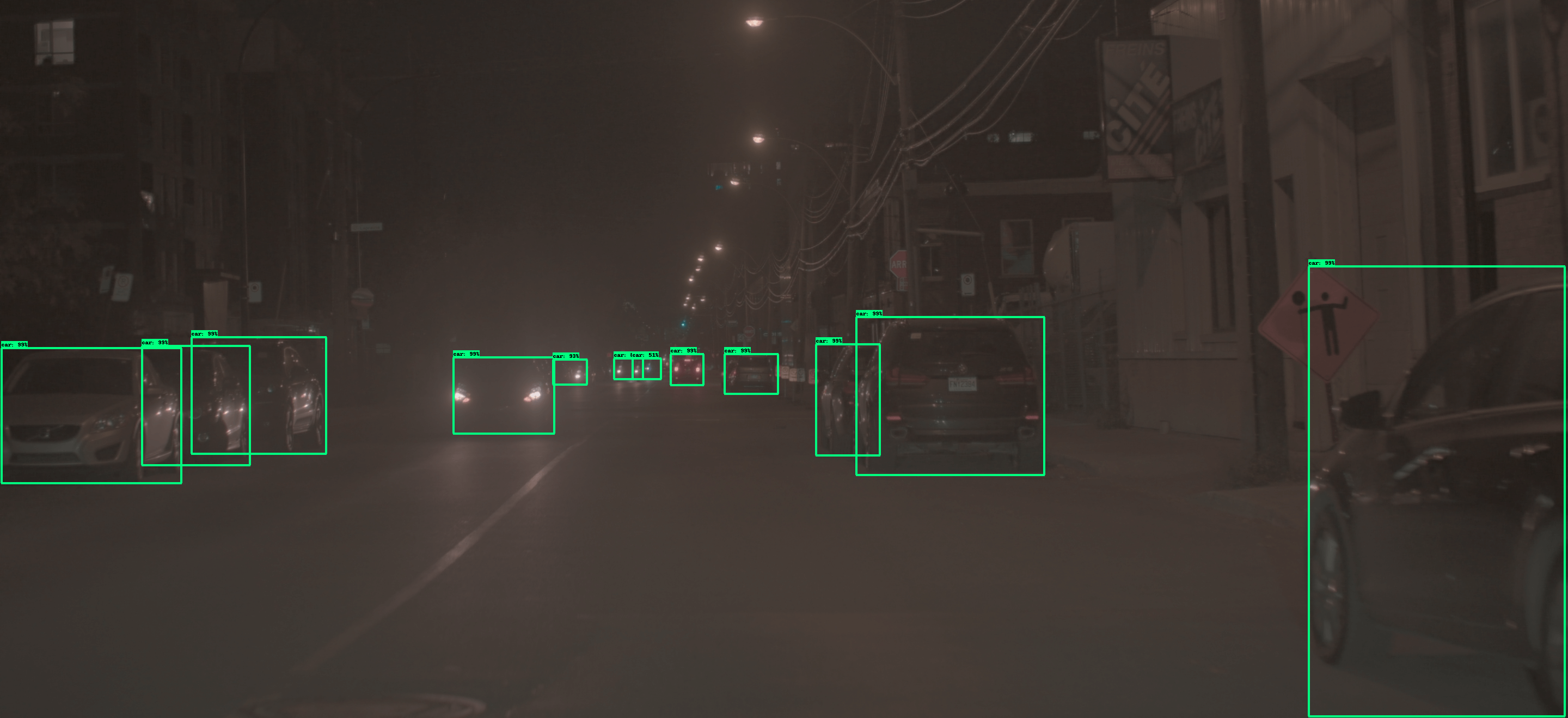

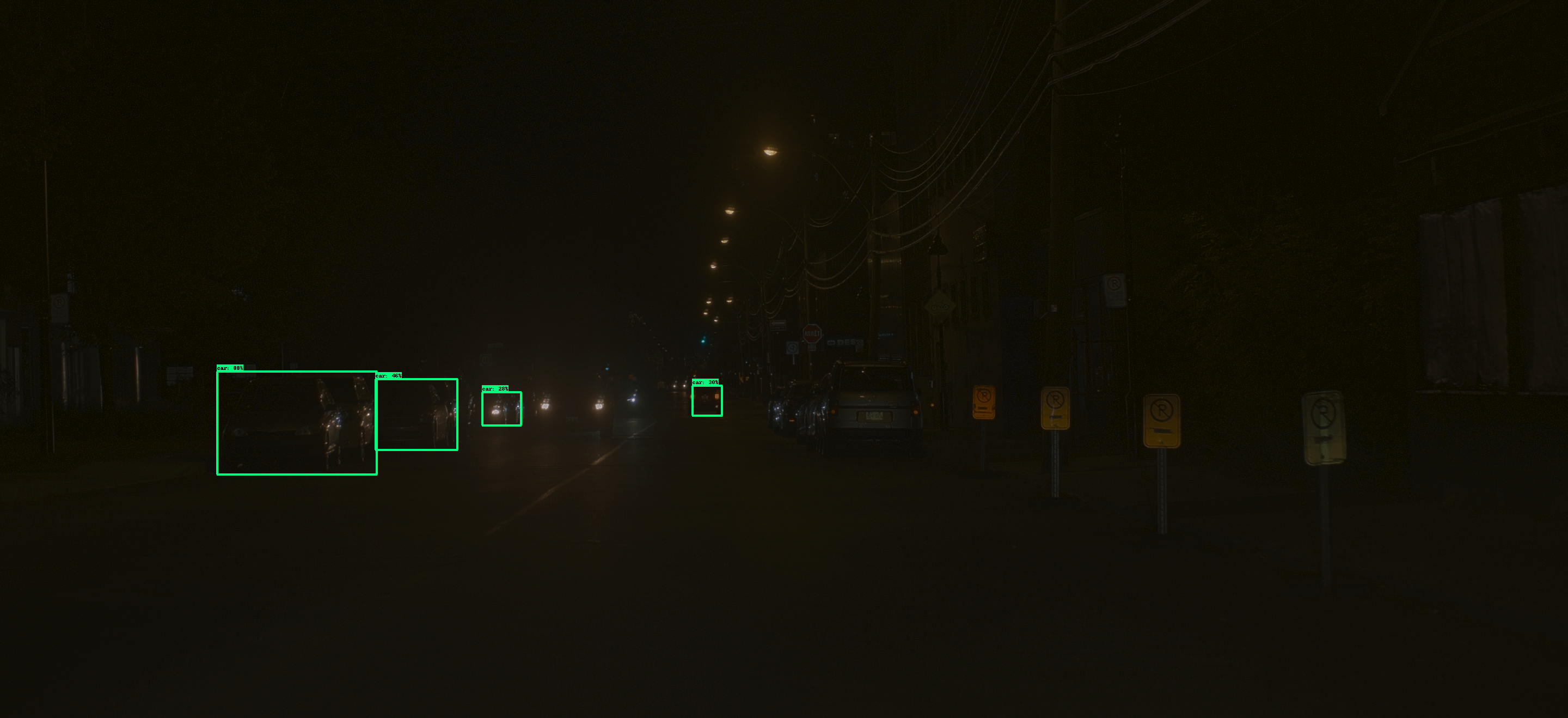

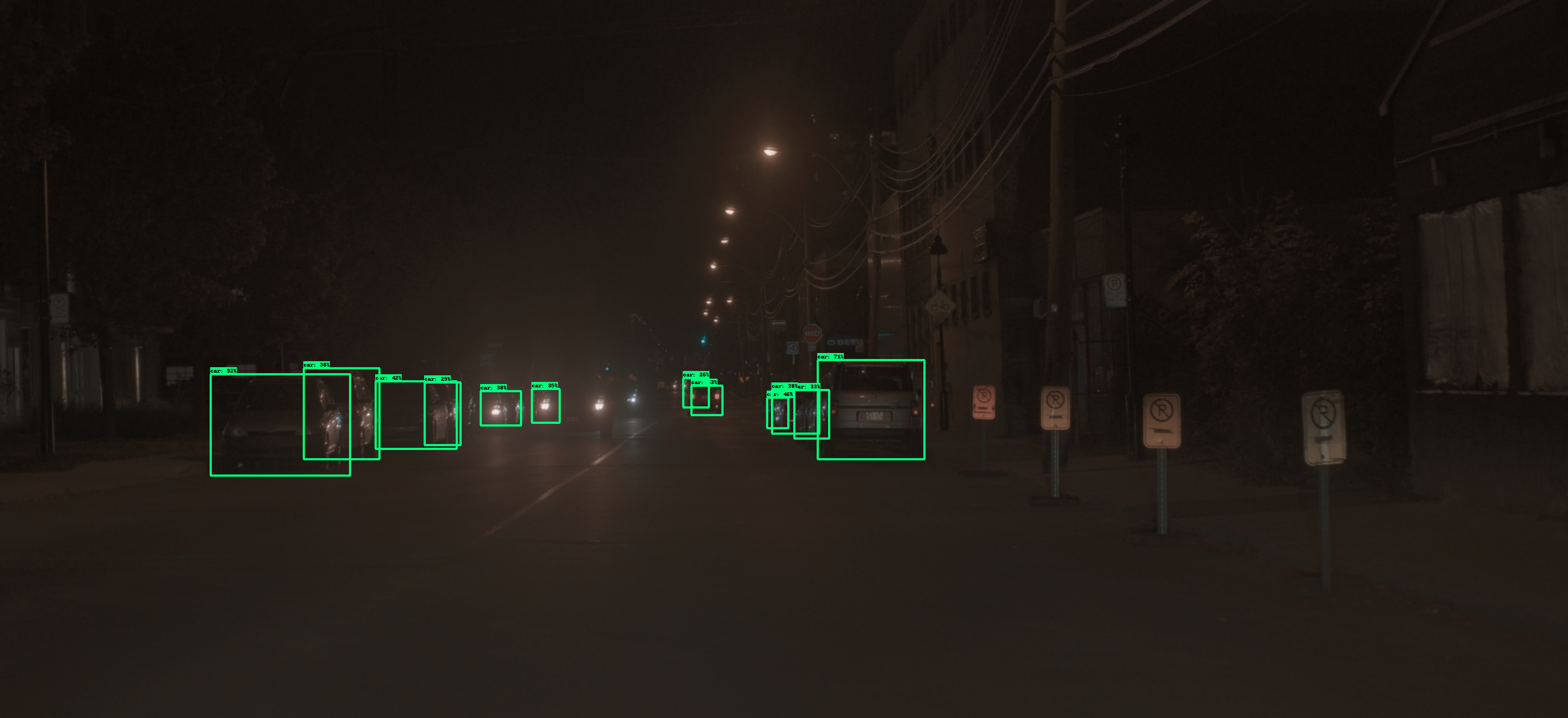

Results of joint sensor, ISP and CNN optimization for car and pedestrian detection with an automotive camera.

First column: expert-tuned sensor and ISP with a fine-tuned image understanding CNN. Second column: sensor and ISP optimized with Mosleh et al. [1] (extended to sensor optimization) with a fine-tuned CNN. Third column: one iteration of the proposed joint sensor, ISP and CNN optimization method. Fourth column: converged results with the proposed joint sensor, ISP and CNN optimization method. By raising contrast within the 14-bit ISP output, the proposed method significantly outperforms both the expert-tuned pipeline and Mosleh et al. [1]. (Zoom in to see confidence scores and class labels.)
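For a sense of scale, the sketch below converts between decibels and linear contrast ratios, assuming the 20·log10 amplitude convention usual for image sensor dynamic range. If the 14-bit ISP output were a linear encoding, it would span only about 84 dB, whereas 280 dB corresponds to a 10^14 : 1 ratio. This is only a back-of-the-envelope comparison that ignores noise and the nonlinear tone curves an ISP applies; the function names are hypothetical.

```python
import math


def dynamic_range_db(max_signal, min_signal):
    # Dynamic range in decibels with the 20*log10 amplitude convention.
    return 20.0 * math.log10(max_signal / min_signal)


def contrast_ratio_from_db(db):
    # Inverse conversion: linear max/min ratio for a given dB figure.
    return 10.0 ** (db / 20.0)


# Largest vs smallest non-zero code of a hypothetical linear 14-bit encoding.
bits14_db = dynamic_range_db(2 ** 14 - 1, 1)
# Linear contrast ratio corresponding to the 280 dB quoted in the abstract.
scene_ratio = contrast_ratio_from_db(280.0)
print(f"14-bit linear code: {bits14_db:.1f} dB")
print(f"280 dB scene: {scene_ratio:.0e} : 1")
```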

References

[1] Ali Mosleh, Avinash Sharma, Emmanuel Onzon, Fahim Mannan, Nicolas Robidoux, and Felix Heide. Hardware-in-the-loop end-to-end optimization of camera image processing pipelines. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2020.