Gated2Depth: Real-time Dense Lidar from Gated Images

- Tobias Gruber

- Frank Julca-Aguilar

- Mario Bijelic

- Felix Heide

ICCV 2019 (Oral)

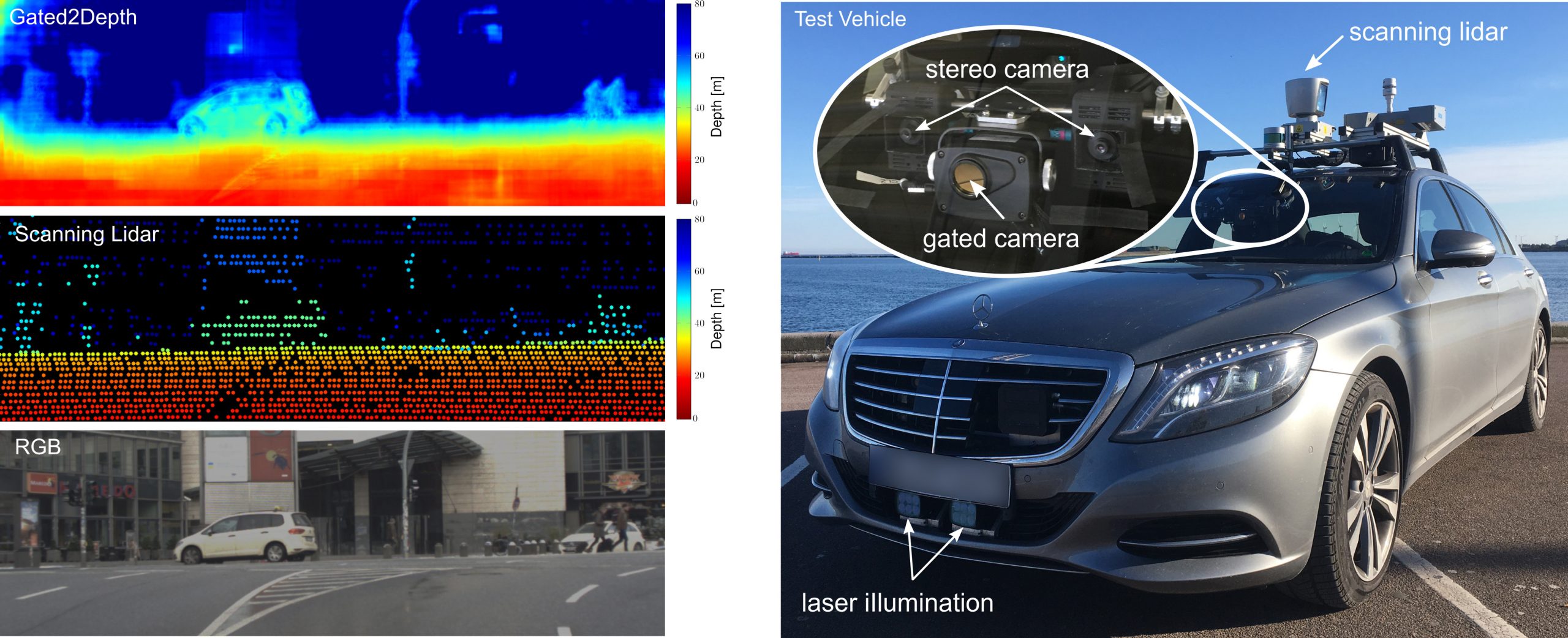

We demonstrate that it is possible to recover high-resolution dense depth using flash NIR illumination and a modified CMOS camera, without scanning mechanisms (top-left). Our method maps measurements from a flood-illuminated gated camera behind the windshield (inset right), captured in real time, to dense depth maps with depth accuracy comparable to lidar measurements (center-left). In contrast to the sparse lidar measurements, these depth maps are high-resolution, enabling semantic understanding at long ranges. We evaluate our method on synthetic and real data, collected with a test vehicle and a scanning lidar (Velodyne HDL64-S3D) as reference (right).

We present an imaging framework which converts three images from a gated camera into high-resolution depth maps with depth accuracy comparable to pulsed lidar measurements. Existing scanning lidar systems achieve low spatial resolution at large ranges due to mechanically limited angular sampling rates, restricting scene understanding tasks to close-range clusters with dense sampling. Moreover, today's pulsed lidar scanners suffer from high cost, high power consumption, and large form factors, and they fail in the presence of strong backscatter. We depart from point scanning and demonstrate that it is possible to turn a low-cost CMOS gated imager into a dense depth camera with at least 80 m range by learning depth from three gated images. The proposed architecture exploits semantic context across gated slices and is trained with a synthetic discriminator loss, without the need for dense depth labels. The proposed replacement for scanning lidar systems runs in real time, handles backscatter, and provides dense depth at long ranges. We validate our approach in simulation and on real-world data acquired over 4,000 km of driving in northern Europe.
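To illustrate why a fixed angular sampling rate leads to low spatial resolution at large ranges, the following minimal sketch computes the gap between neighbouring samples as a function of distance. The angular step of 0.4° is an assumed, illustrative value and is not taken from the paper; `sample_spacing_m` is a hypothetical helper name.

```python
import math


def sample_spacing_m(range_m: float, angular_step_deg: float) -> float:
    """Gap between two neighbouring samples on a surface facing the sensor.

    Two rays separated by `angular_step_deg` hit a fronto-parallel surface
    at distance `range_m`; the chord between the hit points is
    2 * r * tan(step / 2).
    """
    half_step_rad = math.radians(angular_step_deg) / 2.0
    return 2.0 * range_m * math.tan(half_step_rad)


# Assumed angular step between adjacent scan lines (illustrative only).
ANGULAR_STEP_DEG = 0.4

for r in (10, 40, 80):
    gap = sample_spacing_m(r, ANGULAR_STEP_DEG)
    print(f"{r:>3} m range: {gap:.2f} m between samples")
```

Under this assumption the gap grows linearly with range, so a distant object is covered by only a few samples, whereas an image sensor keeps its full pixel grid at every distance.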

Paper

Tobias Gruber, Frank Julca-Aguilar, Mario Bijelic, Felix Heide

Gated2Depth: Real-Time Dense Lidar From Gated Images

ICCV 2019 (Oral)